One of the features introduced in OpenShift Origin 1.5 and OpenShift Container Platform 3.5 is support for multicast for containers in the same project. When multicast is enabled for a project, containers can communicate with each other, but more importantly, containers in other projects do not see that communication.

Multicast lets you send packets to recipients you don’t know in advance: anyone who listens on a specific group address receives them. Regular (unicast) UDP, by contrast, sends each packet to a single well-known destination. It’s a bit like the topic-subscriber pattern used in message queues.
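To make the difference from unicast concrete, here is a minimal Go sketch of one process that both joins a multicast group and announces itself to it. It is not the code of the demo application used later in this post. The group address and port (239.255.0.1:9999), the function names, and the three announcement rounds are assumptions picked for illustration.

```go
package main

import (
	"log"
	"net"
	"os"
	"time"
)

// groupAddr is a hypothetical multicast group and port chosen for this sketch.
const groupAddr = "239.255.0.1:9999"

// resolveGroup parses the group address; no network access is needed for an IP literal.
func resolveGroup() (*net.UDPAddr, error) {
	return net.ResolveUDPAddr("udp4", groupAddr)
}

// listen joins the group on the default interface and logs every datagram it receives.
func listen(group *net.UDPAddr) error {
	conn, err := net.ListenMulticastUDP("udp4", nil, group)
	if err != nil {
		return err
	}
	defer conn.Close()
	buf := make([]byte, 256)
	for {
		n, src, err := conn.ReadFromUDP(buf)
		if err != nil {
			return err
		}
		log.Printf("received %q from %s", buf[:n], src)
	}
}

// announce sends name to the group a fixed number of times, pausing between sends.
// The sender does not need to know who, if anyone, is listening.
func announce(group *net.UDPAddr, name string, rounds int, every time.Duration) error {
	conn, err := net.DialUDP("udp4", nil, group)
	if err != nil {
		return err
	}
	defer conn.Close()
	for i := 0; i < rounds; i++ {
		if _, err := conn.Write([]byte(name)); err != nil {
			return err
		}
		time.Sleep(every)
	}
	return nil
}

func main() {
	group, err := resolveGroup()
	if err != nil {
		log.Printf("bad group address: %v", err)
		return
	}
	go func() {
		if err := listen(group); err != nil {
			log.Printf("listener stopped: %v", err)
		}
	}()
	if err := announce(group, os.Getenv("HOSTNAME"), 3, 2*time.Second); err != nil {
		log.Printf("announce failed: %v", err)
	}
}
```

Note that the sender only names the group, never an individual recipient. Any number of listeners can join or leave without the sender changing anything.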

Why Multicast?

Many tools support Kubernetes nowadays; that is, they can integrate with the Kubernetes API and use it for discovery (for example, the well-known JGroups library used in many Java projects). However, that makes the application dependent on the Kubernetes APIs and requires the user to manage permissions through a service account, which may be considered another configuration “hassle.” On the other hand, this approach can be managed by the user without involving the cluster operator, and it provides push notifications.

Another option is to use the “headless” services provided by Kubernetes, which is very simple. Unlike a normal Kubernetes service, a headless service does not act as a load balancer; instead, it lets the user query the backends using DNS. The A records returned for a headless service contain the IP addresses of all the pods that act as endpoints of that service, instead of the IP address of the service itself. It’s very easy for the user to set up without involving cluster operators, but it does not provide push notifications. Instead, the user has to query DNS at intervals and manage the list of peers manually.
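The polling approach could look roughly like the Go sketch below. It resolves a headless service name at intervals and compares each answer with the previous one to work out which peers joined or left. The service name, the poll interval, and the number of rounds are hypothetical values chosen for this example.

```go
package main

import (
	"log"
	"net"
	"sort"
	"time"
)

// serviceName is a hypothetical headless service; substitute your own service and project.
const serviceName = "demo-headless.myproject.svc.cluster.local"

// pollInterval is an assumed value; pick one that suits how quickly you need to notice changes.
const pollInterval = 5 * time.Second

// toSet turns a list of addresses into a set, dropping duplicates.
func toSet(addrs []string) map[string]bool {
	set := make(map[string]bool, len(addrs))
	for _, a := range addrs {
		set[a] = true
	}
	return set
}

// diff reports which addresses are new in cur and which ones disappeared since old.
// Both results are sorted so the output is stable.
func diff(old, cur []string) (joined, left []string) {
	oldSet, curSet := toSet(old), toSet(cur)
	for a := range curSet {
		if !oldSet[a] {
			joined = append(joined, a)
		}
	}
	for a := range oldSet {
		if !curSet[a] {
			left = append(left, a)
		}
	}
	sort.Strings(joined)
	sort.Strings(left)
	return joined, left
}

func main() {
	var known []string
	for round := 0; round < 3; round++ {
		if round > 0 {
			time.Sleep(pollInterval)
		}
		// For a headless service, the lookup returns the pod IPs rather than a service IP.
		addrs, err := net.LookupHost(serviceName)
		if err != nil {
			log.Printf("lookup failed: %v", err)
			continue
		}
		joined, left := diff(known, addrs)
		log.Printf("joined=%v left=%v", joined, left)
		known = addrs
	}
}
```

A change in the peer set is only noticed at the next poll, which is the cost of this approach compared to push-based discovery.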

A third option is to use something like etcd, ZooKeeper, or Consul to manage the peers, but that adds another component to the system design, which may not always be possible.

The benefits of multicast are that it provides push notifications, does not tie the application to specific APIs, and is easy to use. The drawback is that multicast currently requires operations to annotate the project, but as you’ll see in this post, that’s very easy to do in OpenShift.

Enabling Multicast

As mentioned, operations must enable multicast by annotating the project that’s allowed to use multicast:

oc annotate netnamespace admin netnamespace.network.openshift.io/multicast-enabled="true"

The other requirement is that the ovs-multitenant SDN plugin must be used to manage the networking layer of the cluster. Once these two requirements are met, multicast “just works” in your project.

OpenShift also provides the ovs-networkpolicy plugin, which supports multicast as well. However, it should only be used for light workloads at this time: it’s still in technical preview and should not be used in production environments.

Using Multicast

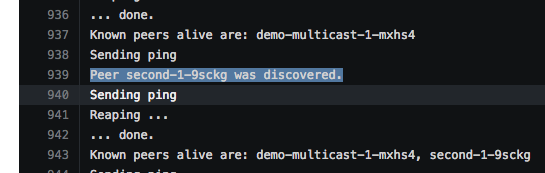

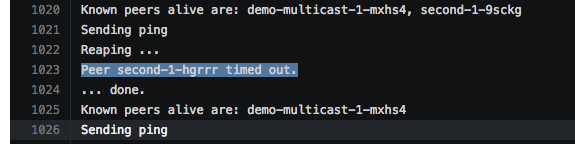

To test multicast, I have created a simple Go application that reads the HOSTNAME environment variable (which is the name of the pod) and broadcasts the name every 2 seconds. At the same time, the application listens for broadcasts from other instances and keeps a map of known instances (hostnames) and the time when the last ping (broadcast from that instance) was received. Plus there is a simple reaper in the background (called every 3 seconds) that marks any instance that has not sent a ping for the last 5 seconds as dead.
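The bookkeeping described above (a map of known instances plus a reaper) can be sketched in Go as follows. This is an illustration under assumptions, not the demo application’s actual source: the type and method names are made up for this example, and the pod names in main are hypothetical. Only the 5-second timeout comes from the description above.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
	"time"
)

// peer holds what is known about one instance.
type peer struct {
	lastPing time.Time
	alive    bool
}

// PeerTable maps hostnames to their last ping. It is safe for concurrent use,
// so a listener goroutine and a reaper goroutine can share it.
type PeerTable struct {
	mu      sync.Mutex
	timeout time.Duration
	peers   map[string]*peer
}

// NewPeerTable creates a table that considers a peer dead after timeout without a ping.
func NewPeerTable(timeout time.Duration) *PeerTable {
	return &PeerTable{timeout: timeout, peers: make(map[string]*peer)}
}

// Seen records a ping from name at the given time and marks the peer alive.
func (t *PeerTable) Seen(name string, at time.Time) {
	t.mu.Lock()
	defer t.mu.Unlock()
	t.peers[name] = &peer{lastPing: at, alive: true}
}

// LastSeen returns the time of the last ping from name and whether name is known.
func (t *PeerTable) LastSeen(name string) (time.Time, bool) {
	t.mu.Lock()
	defer t.mu.Unlock()
	p, ok := t.peers[name]
	if !ok {
		return time.Time{}, false
	}
	return p.lastPing, true
}

// Reap marks every live peer whose last ping is older than the timeout as dead
// and returns the sorted names of the peers that were newly marked.
func (t *PeerTable) Reap(now time.Time) []string {
	t.mu.Lock()
	defer t.mu.Unlock()
	var dead []string
	for name, p := range t.peers {
		if p.alive && now.Sub(p.lastPing) > t.timeout {
			p.alive = false
			dead = append(dead, name)
		}
	}
	sort.Strings(dead)
	return dead
}

func main() {
	table := NewPeerTable(5 * time.Second)
	start := time.Now()

	// Simulated pings; in a real application these would come from the multicast listener.
	table.Seen("first-1-abcde", start)
	table.Seen("second-1-fghij", start.Add(4*time.Second))

	// Six seconds in, only the first instance has been silent for more than 5 seconds.
	fmt.Println(table.Reap(start.Add(6 * time.Second)))
}
```

Passing the current time into Seen and Reap, rather than calling time.Now inside them, keeps the logic easy to test. In a running application the reaper would simply call Reap from a ticker every 3 seconds.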

For simplicity, the application is packaged as a container image and published on Docker Hub. To deploy the application, simply run the oc new-app command:

oc new-app mjelen/demo-multicast --name=first

Now the application is running and sending its hostname, but it does not see any peers because there aren’t any. To discover peers, deploy another instance of the application by running the command again with a different name:

oc new-app mjelen/demo-multicast --name=second

Then watch the logs, either on the command line (oc logs) or in the web console.

To create more instances, you do not have to create new deployments of the application, because scaling a deployment works exactly the same way. That is, it doesn’t matter whether the containers are in the same pod or in different pods; the communication is isolated at the project level. Scale the deployment to add more instances:

oc scale dc second --replicas=2

And finally scale down again to see the dead instances being reaped:

oc scale dc second --replicas=1

And that’s it. As you can see, it’s very easy to get multicast up and running inside OpenShift.

Conclusion

It’s easy to use multicast by using the ovs-multitenant SDN plugin and enabling this feature by annotating the project.

Side Note

While testing this feature on a single-node all-in-one cluster, I ran into a problem: multicast did not work. If you encounter this problem, either move to a multi-node cluster or check whether the fixes have already been released.
