Introduction

Most applications deployed on Red Hat OpenShift have some endpoints exposed outside the cluster through a reverse proxy, normally the OpenShift router (which is implemented with HAProxy).

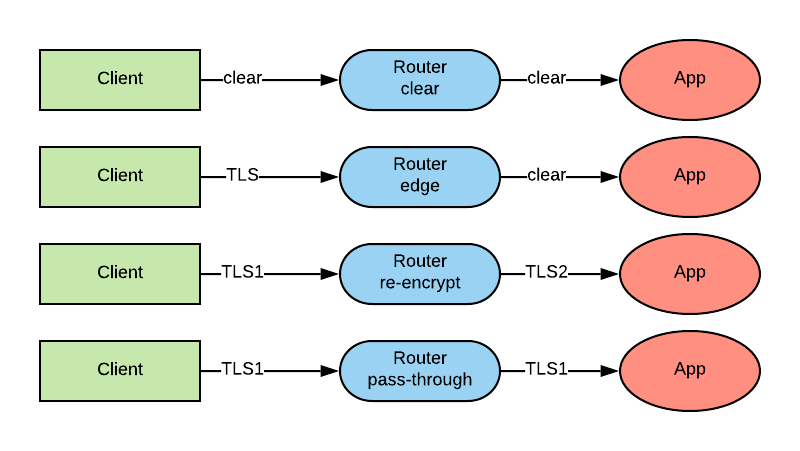

When exposing an endpoint through the router, the following TLS termination options are available:

- Clear text: the connection is always unencrypted.

- Edge: the connection is encrypted from the client to the reverse proxy, but unencrypted from the reverse proxy to the pod.

- Re-encrypt: the encrypted connection is terminated at the reverse proxy, which then opens a new encrypted connection to the pod.

- Passthrough: the reverse proxy does not terminate the encrypted connection; the pod does. The reverse proxy uses the Server Name Indication (SNI) field to determine which backend to forward the connection to, but in every other respect it acts as a Layer 4 load balancer.

In this article, we will examine end-to-end encryption options. Requiring end-to-end encryption rules out the first two options outlined above (clear text and edge) and leaves re-encrypt and passthrough as the remaining options.

I believe there is value in ensuring that our connections are always fully encrypted, and that we should design the security of our systems based on Zero Trust Network principles (see also BeyondCorp, Google's reference architecture for zero trust networks). End-to-end encryption is one of the cornerstone principles of Zero Trust Networks.

We also want the ability to connect to our pods directly from other pods. This feature is important because we don’t want to make any assumption about the location of the consumers of our services (inside or outside of the cluster). This way we don’t have to change our architecture if, for example, a consumer moves from outside to inside the cluster.

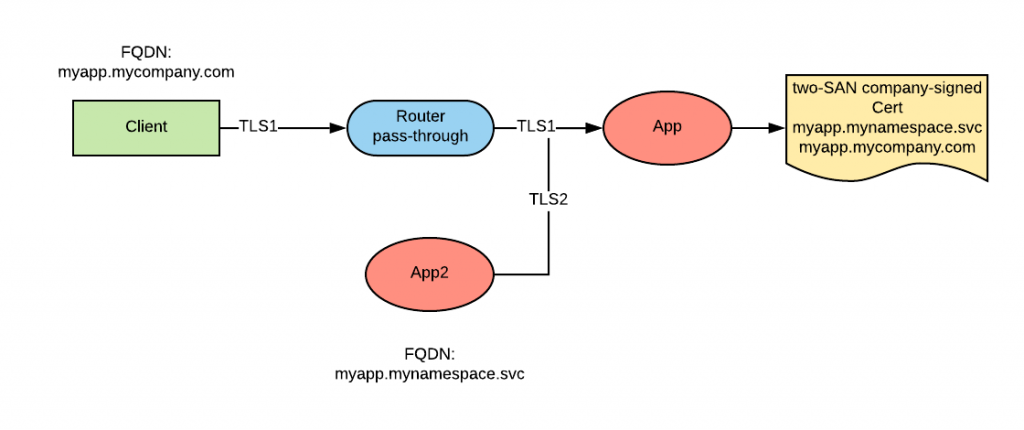

This complicates the solution because normally a service will have two different names (internal and external), as shown in the following diagram:

Certificate validation checks that the Subject Alternative Name (SAN) of the certificate presented by the service matches the Fully Qualified Domain Name (FQDN) that the client used to discover the service IP. The service must therefore present a certificate that matches the name the client used, and that name depends on where the connection originates.

Another feature that is paramount to achieving a high speed of delivery is full automation of certificate requests and distribution. We want to operate in an environment in which development teams can self-provision their infrastructure, including certificates.

In summary, the following are the requirements:

- End-to-end encryption.

- Support for both internal and external communication.

- Full automation when requesting and distributing certificates.

In this article, we will describe a few possible approaches to satisfy these requirements.

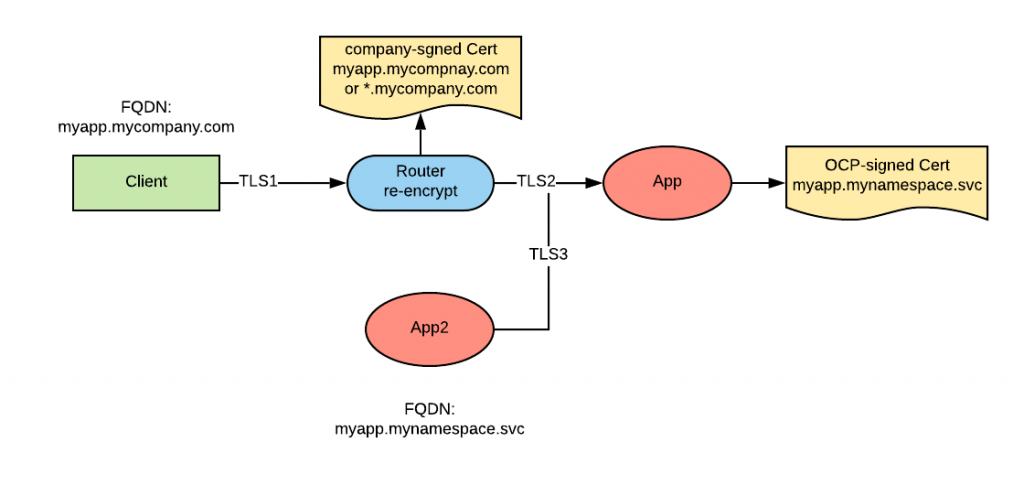

Re-encrypt Routes

With re-encrypt routes, we can set up the configuration as depicted in the following diagram:

The router presents a certificate that can satisfy the consumer using the external FQDN, while the application presents a certificate that can satisfy the consumer using the internal FQDN.

Because the router re-encrypts the connection, it acts as an internal consumer and it will trust the internal certificate presented by the application.

To dynamically create and distribute the internal certificate, we can use the service serving certificate secret feature of OpenShift. Certificates generated with this feature are signed by the OpenShift service CA, a PKI dedicated to applications running in the cluster.
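A minimal sketch of requesting a service serving certificate, assuming OpenShift 4 (OpenShift 3.x used the service.alpha.openshift.io/serving-cert-secret-name annotation instead). The service name myapp, the port, and the secret name myapp-internal-tls are hypothetical. OpenShift writes a tls.crt/tls.key pair into the named secret, with a certificate valid for myapp.<namespace>.svc and myapp.<namespace>.svc.cluster.local.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp                      # hypothetical service name
  annotations:
    # Ask OpenShift to generate a certificate for myapp.<namespace>.svc,
    # signed by the service CA, and store it in this (hypothetical) secret.
    service.beta.openshift.io/serving-cert-secret-name: myapp-internal-tls
spec:
  selector:
    app: myapp
  ports:
  - name: https                    # port name that a route can target
    port: 8443
    targetPort: 8443
```

The application then mounts the secret as a volume and serves TLS with it; clients, including the router, validate it against the service CA.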

On the route, we need to expose a company-signed certificate. To do so, we can use either a route-specific certificate or a wildcard certificate configured in the router.

To provide self-serviced route-specific certificates, we can use a dedicated operator which is designed to perform this function (cert-operator is an example of such an operator that supports a few Certificate Authority (CA) platform integrations).
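A sketch of a re-encrypt route with a route-specific certificate. The host, the service name myapp, and the assumption that the service exposes a port named https are hypothetical, and the PEM blocks are placeholders for the company-signed certificate and key (which an operator like the one mentioned above would normally fill in). Leaving destinationCACertificate empty makes the OpenShift router trust the service CA that signs service serving certificates, which is what allows it to validate the internal certificate.

```yaml
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: myapp
spec:
  host: myapp.<external-domain>    # external FQDN used by outside consumers
  to:
    kind: Service
    name: myapp
  port:
    targetPort: https              # assumes a service port named "https"
  tls:
    termination: reencrypt
    # Company-signed certificate presented to external clients (placeholders).
    certificate: |
      <PEM-encoded company-signed certificate>
    key: |
      <PEM-encoded private key>
    # destinationCACertificate is omitted on purpose: the router then trusts
    # the service CA when validating the certificate presented by the pod.
```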

Wildcard certificates are another option, and they provide automation implicitly because a single certificate covers every route under the wildcard domain. However, wildcard certificates are discouraged by the Internet Engineering Task Force (IETF), which encourages using multiple-SAN certificates or designing applications that are smart enough to present the proper certificate by analyzing the SNI field, which carries the name of the host the client is attempting to connect to. For this reason, wildcard certificates should be seen as a temporary solution and used only in non-production environments.
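For non-production clusters where a wildcard certificate is acceptable, OpenShift 4 lets you set it as the router's default certificate through the default IngressController. A sketch of the relevant fields, assuming a kubernetes.io/tls secret with the hypothetical name custom-certs-default already exists in the openshift-ingress namespace and holds a certificate for *.<external-domain>:

```yaml
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: default
  namespace: openshift-ingress-operator
spec:
  defaultCertificate:
    # Secret in the openshift-ingress namespace containing the wildcard
    # certificate and key (hypothetical name).
    name: custom-certs-default
```

Edge and re-encrypt routes that do not carry their own certificate fall back to this default certificate.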

Passthrough Route

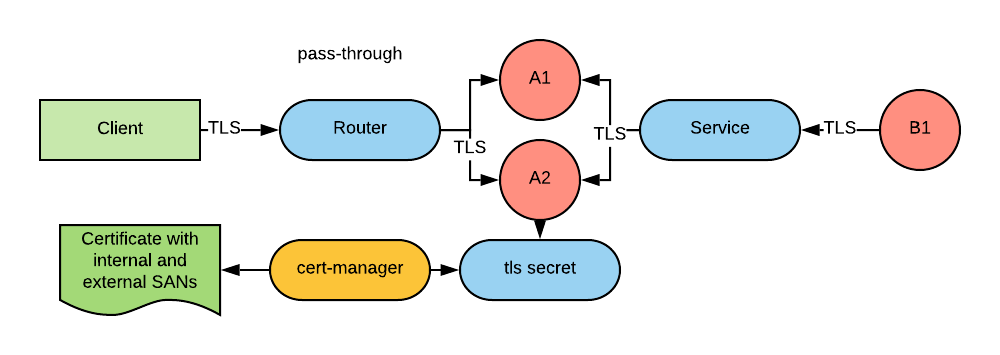

With a passthrough route, the architecture is depicted as follows:

Certificates for the application can be generated by an operator, for example cert-manager.
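A sketch of the passthrough route itself, with hypothetical names (host, service myapp, a service port named https). A passthrough route carries no certificate of its own: the router only reads the SNI field, so the pod must present a certificate that is valid for the external host name.

```yaml
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: myapp
spec:
  host: myapp.<external-domain>
  to:
    kind: Service
    name: myapp
  port:
    targetPort: https
  tls:
    # The router forwards the TLS stream unchanged based on SNI;
    # the pod terminates TLS with its own certificate.
    termination: passthrough
```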

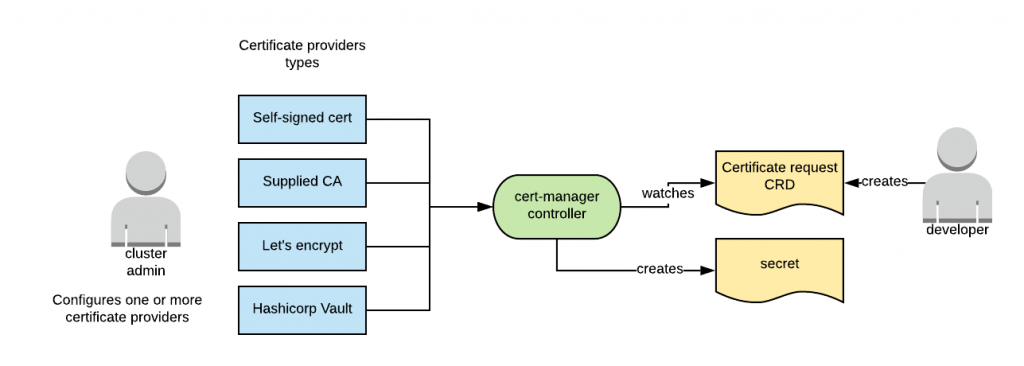

The upstream community has been coalescing around this solution, so much so that kube-lego and kube-cert-manager have been discontinued in favor of cert-manager. The cert-manager controller creates secrets containing certificates, based on custom resources that request those certificates.

Based on a plug-in architecture, cert-manager can support multiple CA implementations. Currently, it supports externally supplied CAs (either root CAs or subordinate CAs), integration with systems that support the Automated Certificate Management Environment (ACME) protocol (such as Let’s Encrypt), and integration with HashiCorp Vault. This diagram shows the cert-manager architecture:

Because of the plug-in architecture, it is possible to add more implementations.
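As an illustration of one issuer type, a sketch of a CA-backed ClusterIssuer, assuming the cert-manager.io/v1 API. The issuer name myca-issuer and the secret name myca-key-pair are hypothetical. The secret holds the signing CA certificate and key and, for a ClusterIssuer, must live in cert-manager's cluster resource namespace (by default, the namespace cert-manager is installed in).

```yaml
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: myca-issuer              # hypothetical; referenced by Certificate resources
spec:
  ca:
    # kubernetes.io/tls secret with the CA certificate (tls.crt) and key (tls.key)
    secretName: myca-key-pair
```

ACME and Vault issuers are declared the same way, with an acme or vault block in place of ca.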

To support the passthrough option, we can configure cert-manager to generate a certificate with two SANs.

A cert-manager Certificate custom resource for this configuration looks as follows:

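```yaml
# cert-manager releases before v0.11 used apiVersion certmanager.k8s.io/v1alpha1;
# current releases serve cert-manager.io/v1, which accepts the same fields.
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: mycert
spec:
  # Secret that receives the issued certificate (tls.crt) and key (tls.key)
  secretName: certs
  dnsNames:
  - myapp.<external-domain>   # external name, reached through the passthrough route
  - myapp.<namespace>.svc     # internal name, reached through the service
  issuerRef:
    # Object names must be lowercase DNS-compatible names
    name: myca-issuer
    kind: ClusterIssuer
```

This example requests a certificate with two SANs: one for external connections arriving through a passthrough route, and one for internal connections arriving through the service. The certificate will be issued by myca-issuer, a reference to an instance of one of the supported certificate issuers previously configured by a cluster admin. The resulting deployment appears as follows: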

A Helm chart is available at this location to automate the deployment of cert-manager in OpenShift.
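With a passthrough route, the pod itself must serve the issued certificate. A sketch of a Deployment that mounts the certs secret produced by the Certificate example above; the image placeholder, port, labels, and the /etc/tls mount path are hypothetical, and the application must be configured to read tls.crt and tls.key from that path.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
      - name: myapp
        image: <your-image>          # placeholder
        ports:
        - name: https
          containerPort: 8443
        volumeMounts:
        # cert-manager writes tls.crt and tls.key (and usually ca.crt) here
        - name: tls
          mountPath: /etc/tls
          readOnly: true
      volumes:
      - name: tls
        secret:
          secretName: certs          # secretName from the Certificate resource
```

Because the secret is mounted as a volume (not through subPath), the kubelet refreshes the files when cert-manager renews the certificate, but the application still has to reload them.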

Istio Ingress-Gateway and mTLS

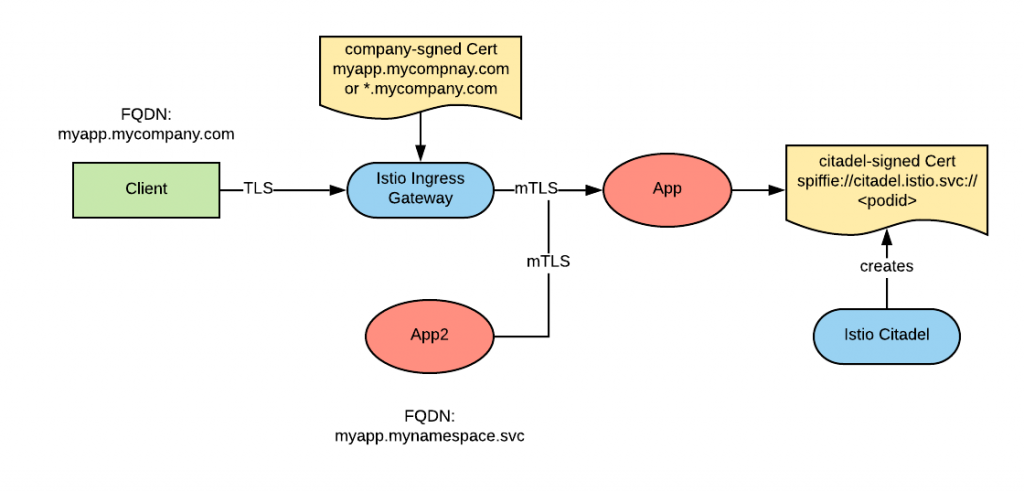

OpenShift Service Mesh (whose corresponding upstream project is Istio) includes its own reverse proxy called Ingress-Gateway, implemented by Envoy.

In mutual TLS authentication (mTLS), the client and the server authenticate each other, as opposed to only the client authenticating the server. Because the server can authenticate the client, it can also apply some level of authorization based on the identity the client presents.

Mutual TLS is generally considered difficult to implement because it adds the onus of distributing certificates to the client, a challenge that is even more complex than distributing certificates to the server, since the clients may not all be known in advance.

Citadel is the component in Istio that manages certificates. Istio certificates are based on the SPIFFE specification and are better suited to modeling workload identities. SPIFFE identities are expressed as a URI in the SAN field, of the form spiffe://trust-domain/path; in Istio, the path encodes the workload's namespace and service account (for example, spiffe://cluster.local/ns/<namespace>/sa/<service-account>).

Citadel manages its own PKI and can be initialized with a RootCA or with an externally supplied one. We recommend using a RootCA.
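When Citadel is given an externally supplied CA, upstream Istio's documented procedure for plugging in CA certificates expects a secret named cacerts in the control plane namespace, containing four PEM files. The sketch below assumes istio-system as that namespace and uses placeholders for the PEM content; how the control plane is told to stop generating its own self-signed root differs between Istio and OpenShift Service Mesh releases, so check the documentation for your version.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: cacerts                # name Istio looks for when a CA is plugged in
  namespace: istio-system      # assumed control plane namespace
type: Opaque
stringData:
  # CA certificate Citadel signs workload certificates with
  ca-cert.pem: |
    <PEM-encoded CA certificate>
  # private key of that CA
  ca-key.pem: |
    <PEM-encoded CA private key>
  # root of trust distributed to workloads
  root-cert.pem: |
    <PEM-encoded root certificate>
  # chain from ca-cert.pem up to the root
  cert-chain.pem: |
    <PEM-encoded certificate chain>
```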

With the Ingress-Gateway and Citadel, the following architecture can be built:

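In this architecture, the Ingress-Gateway operates in re-encrypt mode: it terminates the client's TLS connection and forwards traffic to the pods over connections encrypted with mesh certificates. A company-signed certificate must be supplied to the Ingress-Gateway. Because the Ingress-Gateway consumes certificates from secrets, we can use cert-manager to provide it.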
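A sketch of an Istio Gateway that terminates external TLS with a certificate stored in a secret, assuming a recent Istio release in which the gateway loads the secret referenced by credentialName (older releases mounted certificate files into the gateway pod instead). The gateway name, host, and secret name myapp-external-tls are hypothetical; the secret, which cert-manager could populate, must be in the same namespace as the ingress gateway deployment.

```yaml
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: myapp-gateway
spec:
  selector:
    istio: ingressgateway          # default label on the ingress gateway pods
  servers:
  - port:
      number: 443
      name: https
      protocol: HTTPS
    hosts:
    - myapp.<external-domain>
    tls:
      mode: SIMPLE                 # terminate the client's TLS at the gateway
      # Secret holding the company-signed certificate (hypothetical name)
      credentialName: myapp-external-tls
```

A VirtualService (not shown) is still needed to route traffic from this Gateway to the application's service.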

Internal connections in the mesh can be configured to use mTLS. Certificates to support mTLS connections are automatically generated by Citadel and injected into the application pods. mTLS was not one of our initial requirements, but it is a useful optional benefit because it allows us to create RBAC rules that specify which clients can connect to our services.
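In recent Istio releases, strict mTLS and the RBAC rules mentioned above are expressed with PeerAuthentication and AuthorizationPolicy resources; the Istio versions contemporary with this article used older, since-removed APIs for the same purpose. A sketch, assuming a namespace placeholder, an application labeled app: myapp, and a hypothetical caller running as the frontend service account:

```yaml
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: <namespace>
spec:
  mtls:
    mode: STRICT       # reject plain-text connections to workloads in this namespace
---
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: myapp-allow-frontend
  namespace: <namespace>
spec:
  selector:
    matchLabels:
      app: myapp
  action: ALLOW
  rules:
  - from:
    - source:
        # SPIFFE identity of the allowed caller, without the spiffe:// prefix
        principals: ["cluster.local/ns/<namespace>/sa/frontend"]
```

Because the client identity comes from its mTLS certificate, the policy can only be enforced when mTLS is in place.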

Considerations on Ingresses

Ingress resources are Kubernetes-native resources that play the same role as OpenShift routes. In fact, if you create an Ingress resource in OpenShift, the router will recognize this object and configure HAProxy to make use of it.

So, to use only Kubernetes-native resources, it would be useful to recreate the configurations discussed above (the first two in particular) with Ingress resources instead of routes.

This is not possible with standard Ingress resources because, at the moment, Ingresses only support plain text and edge termination. Unfortunately, development of the Ingress specification seems to have stalled in the last year.

Most Ingress controllers support implementation-specific extensions that allow for the configuration of re-encrypt and passthrough ingresses.

So, using implementation-specific extensions, it is certainly possible to implement the architecture discussed above.
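A sketch of a re-encrypting Ingress, assuming the community ingress-nginx controller, whose nginx.ingress.kubernetes.io/backend-protocol annotation makes it connect to the pod over HTTPS. The names, ingress class, secret, and port are hypothetical. With ingress-nginx, passthrough is requested instead with nginx.ingress.kubernetes.io/ssl-passthrough: "true", which requires the controller to run with --enable-ssl-passthrough; recent OpenShift releases similarly read a route.openshift.io/termination annotation (edge, passthrough, or reencrypt) on Ingress objects. Other controllers use different annotations.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: myapp
  annotations:
    # ingress-nginx specific: re-encrypt by talking HTTPS to the backend pods
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - myapp.<external-domain>
    secretName: myapp-external-tls   # company-signed certificate (hypothetical)
  rules:
  - host: myapp.<external-domain>
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: myapp
            port:
              number: 8443
```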

Support for Java applications

Certificates are typically provided in Privacy-Enhanced Mail (PEM) format, and each of the controllers discussed above creates certificates in this format.

Java applications expect to serve and validate certificates using keystores and truststores, respectively, which store certificates in a different format (typically JKS or PKCS#12).

See here for an approach to automatically convert PEM-formatted certificates into keystores and truststores and make them available to Java applications.
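As an alternative sketch, cert-manager releases that serve the cert-manager.io/v1 API can write a PKCS#12 keystore into the certificate secret themselves. The fragment below is meant to be merged under spec: of a Certificate; the password secret name keystore-password and its key are hypothetical, and that secret must exist in the same namespace as the Certificate.

```yaml
# Fragment to merge under spec: of a cert-manager.io/v1 Certificate
keystores:
  pkcs12:
    create: true
    # Secret key that holds the keystore password (hypothetical names)
    passwordSecretRef:
      name: keystore-password
      key: password
```

cert-manager then adds keystore.p12 (and, when the issuing CA is known, truststore.p12) to the certificate secret; a jks block with the same shape produces keystore.jks and truststore.jks instead.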

Conclusion

This article provides several options for configuring end-to-end encryption for your applications in a self-service way. The list is not exhaustive; rather, it is intended to jumpstart the conversation and help you find the ideal configuration for your requirements within your technology landscape.

About the author

Raffaele is a full-stack enterprise architect with 20+ years of experience. Raffaele started his career in Italy as a Java Architect, then gradually moved on to Integration Architect and Enterprise Architect roles. Later he moved to the United States to eventually become an OpenShift Architect for Red Hat consulting services, acquiring, in the process, knowledge of the infrastructure side of IT.

Raffaele currently holds a consulting position as a cross-portfolio application architect with a focus on OpenShift. For most of his career, Raffaele has worked with large financial institutions, which allowed him to acquire an understanding of enterprise processes and of the security and compliance requirements of large enterprise customers.

Raffaele has become part of the CNCF TAG Storage and contributed to the Cloud Native Disaster Recovery whitepaper.

Recently Raffaele has been focusing on how to improve the developer experience by implementing internal development platforms (IDP).
