One of the key differentiators of Red Hat OpenShift as a Kubernetes distribution is the ability to build container images on the platform itself through first-class APIs. No separate infrastructure or manual build process is required to create the images that will run on the platform; the same infrastructure both produces the images and runs them. For developers, this means one less barrier to getting their code deployed.

With OpenShift 4, we have significantly redesigned how this build infrastructure works. Before that sets off alarm bells, I should emphasize that for a consumer of the build APIs and resulting images, the experience is nearly identical. What has changed is what happens under the covers when a build is executed and source code is turned into a runnable image.

How Builds Used To Work (3.x)

In OpenShift 3.x, the build implementation depended entirely on the presence of a Docker daemon on the cluster node host machines. For the two most common build strategies (source-to-image and Dockerfile), both creating the new image and pushing it to the target image registry were handled through interaction with the Docker daemon.

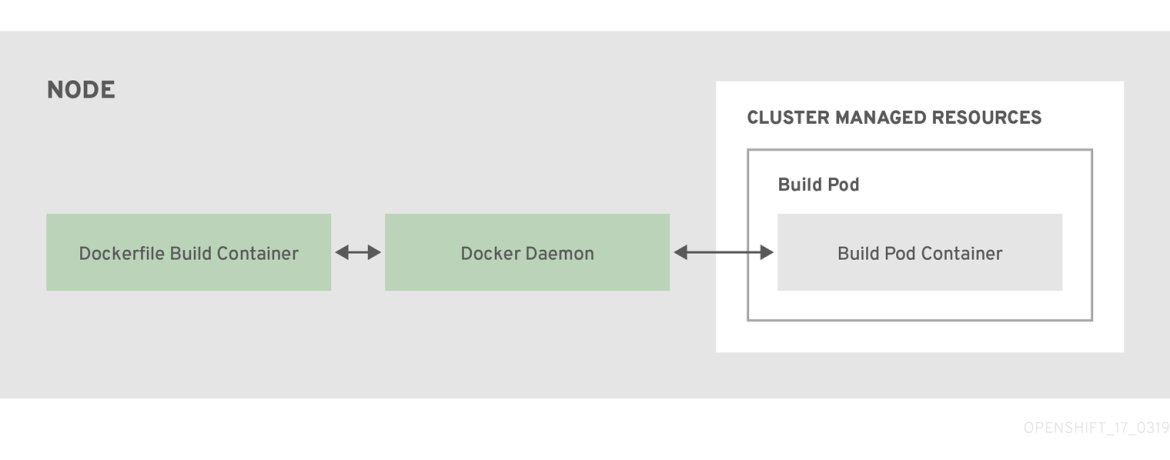

Dockerfile Builds

Dockerfile builds were relatively simple. The OpenShift build logic spawned a privileged pod on the cluster. The pod mounted the Docker socket from the node host, retrieved your source repository and other build inputs into a working directory inside the pod, and then invoked the equivalent of a “docker build” on that directory. The daemon's standard build logic produced a new image, and the OpenShift build logic then used the same daemon to tag the image and push it to the target location.

Figure 1: Components of a Dockerfile build in OpenShift 3.x

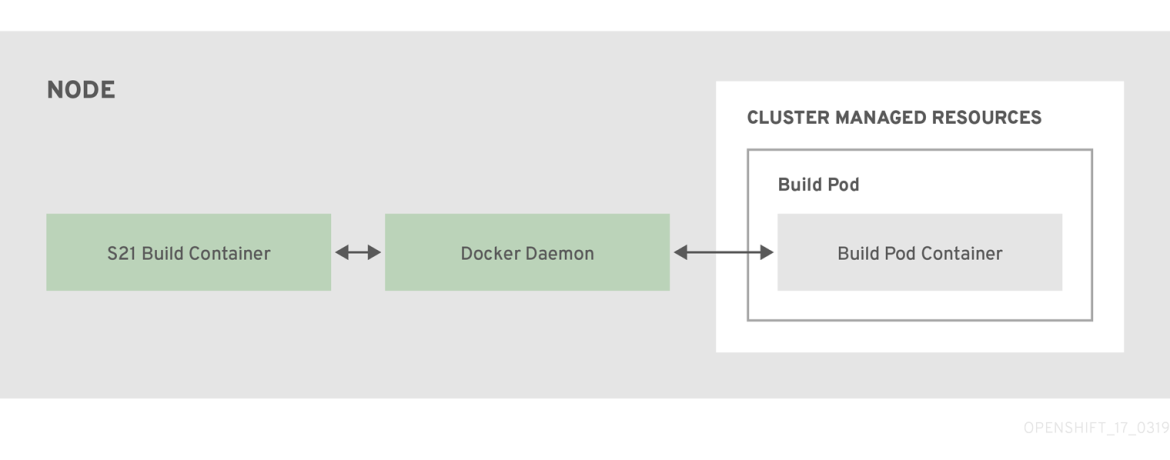

Source-To-Image Builds

Source-To-Image (S2I) builds were slightly more complicated. As with a Dockerfile build, a privileged build pod was created with the host’s Docker socket mounted, and the build inputs were pulled down and placed in a working directory inside the pod.

Next, the S2I component used the Docker socket to direct the daemon to launch a new container running the S2I builder image (e.g. Python, Ruby, etc.). The new container was started with an entrypoint that expected to read a tar file from stdin. Once the container was running, the S2I logic tarred the working directory of inputs and streamed it to the container’s stdin. The container then read the tar content, extracted it to a location within the container, and invoked the S2I builder image’s assemble script.

Once the assemble script had executed its logic to construct the application within the container, S2I invoked the daemon to commit the current container state as a new image. Finally, the new image was tagged and pushed using the daemon.
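The tar-and-stream step described above can be sketched in a few lines. This is a minimal illustration only, not the S2I implementation: the function names are hypothetical, and an in-memory byte string stands in for the container's stdin.

```python
import io
import tarfile
import tempfile
from pathlib import Path


def tar_directory(src_dir: Path) -> bytes:
    """Pack src_dir into an uncompressed tar archive held in memory."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        # arcname="." keeps member paths relative, so the archive can be
        # extracted under any destination directory.
        tar.add(str(src_dir), arcname=".")
    return buf.getvalue()


def extract_stream(stream: bytes, dest_dir: Path) -> None:
    """Unpack a tar byte stream, much as the builder container did with stdin."""
    with tarfile.open(fileobj=io.BytesIO(stream), mode="r") as tar:
        tar.extractall(str(dest_dir))


def demo_round_trip() -> str:
    """Tar a working directory, 'stream' it, and read the file back."""
    with tempfile.TemporaryDirectory() as work, tempfile.TemporaryDirectory() as dest:
        (Path(work) / "app.py").write_text("print('hello')\n")
        extract_stream(tar_directory(Path(work)), Path(dest))
        return (Path(dest) / "app.py").read_text()
```

In the 3.x flow the receiving side ran inside a container launched by the daemon, which is exactly the part that lived outside of any pod.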

Figure 2: Components of an S2I build in OpenShift 3.x

Limitations

The OpenShift 3.x design had a few notable limitations:

- Dependence on a Docker daemon. This meant that even if your cluster was configured to use CRI-O, you still needed to provide a Docker daemon on the hosts for builds to work.

- Exposing the Docker socket to pods. Although we configure the build pods so that normal users cannot exec into them or run arbitrary logic in the pod itself, having the host’s Docker socket available inside a pod where someone could theoretically use it to run arbitrary containers on the host is not ideal from a security perspective.

- Unmanaged containers. The containers launched directly by the daemon at the direction of the build component were not under OpenShift and Kubernetes management as they were not a part of any pod. This made managing their resources and cleanup more complicated.

How We Improved It

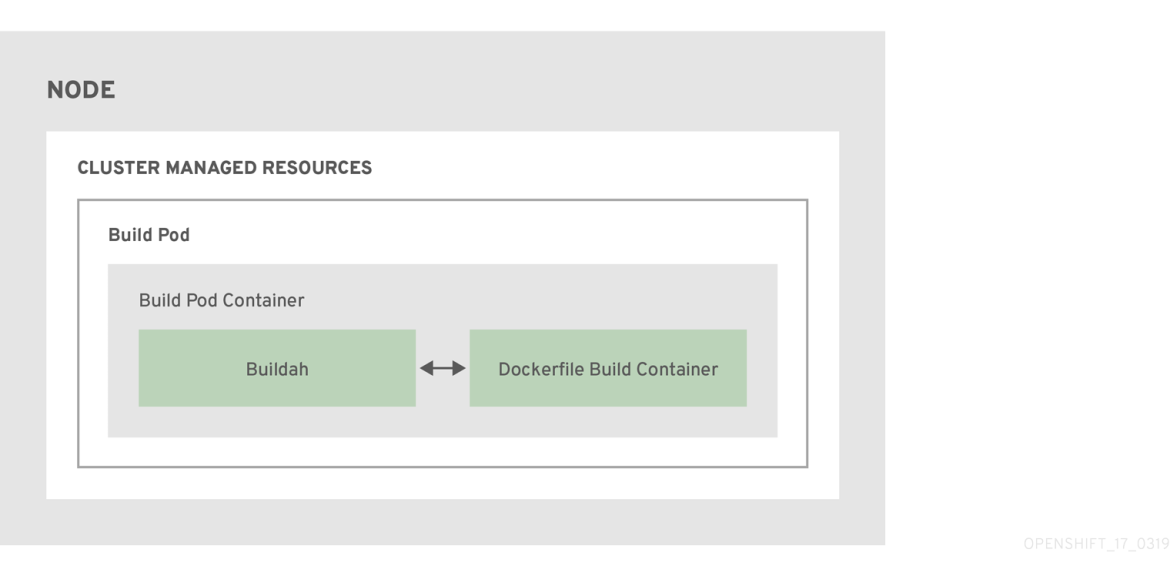

With OpenShift 4 we undertook a fundamental redesign of how images are built on the platform. Instead of relying on a daemon on the host to manage containers, image creation, and image pushing, we are leveraging Buildah running inside our build pods. This aligns with the general OpenShift 4 theme of making everything “just another pod.”

Figure 3: Components of S2I and Dockerfile builds in OpenShift 4

Dockerfile Builds

Builds from a user-supplied Dockerfile behave nearly identically to OpenShift 3. The key difference is that rather than invoking the Docker daemon to build the image from the working directory, we invoke Buildah, which is baked into the OpenShift builder image. Buildah also handles the tagging and pushing of the resulting image. This allowed us to remove the Docker socket mount from the build pod, along with the corresponding dependency on a Docker daemon on the host, thereby improving the security of the Dockerfile build process.
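As a rough illustration, the build-then-push sequence corresponds to two Buildah commands. The sketch below only assembles the command lines and does not run them. How the OpenShift builder actually drives Buildah (CLI or library) is not covered in this post, so treat this as the command-line equivalent; the helper names, image name, and paths are hypothetical.

```python
from typing import List, Optional


def buildah_build_argv(
    context_dir: str, image: str, dockerfile: Optional[str] = None
) -> List[str]:
    """Command line that builds `image` from the Dockerfile in context_dir."""
    argv = ["buildah", "bud", "--tag", image]
    if dockerfile:
        # Only needed when the Dockerfile is not at <context_dir>/Dockerfile.
        argv += ["--file", dockerfile]
    argv.append(context_dir)
    return argv


def buildah_push_argv(
    image: str, destination: str, authfile: Optional[str] = None
) -> List[str]:
    """Command line that pushes a locally built image to a registry."""
    argv = ["buildah", "push"]
    if authfile:
        # Registry credentials, e.g. from a push secret mounted into the pod.
        argv += ["--authfile", authfile]
    argv += [image, f"docker://{destination}"]
    return argv


if __name__ == "__main__":
    image = "registry.example.com/demo/app:latest"  # hypothetical target
    print(" ".join(buildah_build_argv("/tmp/build", image)))
    print(" ".join(buildah_push_argv(image, image)))
```

Because both commands run as ordinary processes inside the build pod, nothing here needs a socket or daemon on the node.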

Source-To-Image Builds

Removing the Docker daemon dependency from S2I was a bit more complicated. S2I’s build flow was tightly coupled to the process of launching containers and committing running containers as new images. Rather than try to replicate that workflow using Buildah/Podman to manage running containers within a pod, we decided to map S2I’s workflow into a pattern we already understood well: Dockerfile builds.

What we needed to accomplish was:

- Start from the S2I builder image the user requested (e.g. Python, Ruby, etc) (FROM)

- Add the source code/other inputs the user provided (COPY)

- Execute the assemble script (RUN)

- Commit the new image

To that end, we created an updated version of S2I that can generate a Dockerfile instead of performing the entire image build itself. Here’s an example of the Dockerfile that S2I generates:

    FROM registry.redhat.io/rhoar-nodejs/nodejs-10
    USER root
    # Copying in source code
    COPY upload/src /tmp/src
    # Change file ownership to the assemble user.
    RUN chown -R 1001:0 /tmp/src
    # Run assemble as non-root user
    USER 1001
    # Assemble script sourced from builder image based on user input or image metadata.
    RUN /usr/libexec/s2i/assemble
    # Run script sourced from builder image based on user input or image metadata.
    CMD /usr/libexec/s2i/run

S2I also provided some other functionality around injecting environment variables, image labels, and allowing overriding of the assemble and run scripts, but those could easily be managed by including additional directives into the generated Dockerfile.
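To make the mapping concrete, here is a minimal sketch of a generator that emits a Dockerfile of the same shape as the example above, with optional `ENV` and `LABEL` directives. It is not the S2I code: the function name, parameters, and defaults are assumptions for illustration, and only the directive sequence is taken from the generated Dockerfile shown earlier.

```python
from typing import Dict, Optional


def generate_s2i_dockerfile(
    builder_image: str,
    env: Optional[Dict[str, str]] = None,
    labels: Optional[Dict[str, str]] = None,
    assemble_user: str = "1001",
    scripts_dir: str = "/usr/libexec/s2i",
) -> str:
    """Return Dockerfile text that mirrors the S2I steps: FROM, COPY, RUN."""
    lines = [f"FROM {builder_image}"]
    # Labels and environment variables become plain directives.
    # Note: values are not escaped here; a real generator would have to
    # handle quotes and newlines in them.
    for key, value in sorted((labels or {}).items()):
        lines.append(f'LABEL {key}="{value}"')
    for key, value in sorted((env or {}).items()):
        lines.append(f'ENV {key}="{value}"')
    lines += [
        "USER root",
        "COPY upload/src /tmp/src",
        f"RUN chown -R {assemble_user}:0 /tmp/src",
        # Drop privileges before running any user-influenced logic.
        f"USER {assemble_user}",
        f"RUN {scripts_dir}/assemble",
        f"CMD {scripts_dir}/run",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(generate_s2i_dockerfile(
        "registry.redhat.io/rhoar-nodejs/nodejs-10",
        env={"NODE_ENV": "production"},  # hypothetical build environment
    ))
```

Overriding the assemble or run script would amount to pointing `scripts_dir` (or the individual `RUN`/`CMD` lines) at a different location.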

We then build the Dockerfile using the same flow as the Buildah-based Dockerfile build described previously. Since the Dockerfile is generated by S2I and not provided by the user, and because the assemble script is run as a non-privileged user, we retain the S2I contract of limiting what a user can do within their S2I build.

Notable Impacts

There are a few subtleties as a result of this redesign that are worth noting:

- Subscription credentials are no longer provided from the host automatically. Since the host’s Docker daemon is not running the build containers, the host’s subscription credentials are unavailable to the build. Dockerfile builds that need subscription credentials to install content must now be given those credentials through the secrets input mechanism. We are investigating a cluster-wide credential mechanism for builds to provide a better experience in the future.

- Custom builds cannot depend on a Docker socket being available. If you previously used the custom build strategy and requested that the Docker socket be mounted from the host, this will no longer work, as there is no Docker socket on the host to mount. For this use case we recommend running Buildah inside your custom builder image.

- The image cache is no longer shared. When using the host’s Docker daemon, images and layers that had previously been pulled to the host could be reused by a subsequent build on that same host. Since builds no longer have access to the host, each build must pull every layer it requires each time it runs. This improves the security posture of builds by providing an additional level of isolation, but it also increases the time required to execute a build. We will be looking to reintroduce a shared image cache in the future.
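For the first point, the secrets input mechanism is configured on the BuildConfig itself. The sketch below assembles such a BuildConfig as a Python dictionary and prints it as JSON. The field layout follows the `build.openshift.io/v1` API as I understand it; the helper name, repository URL, secret name, and destination directory are all hypothetical placeholders.

```python
import json
from typing import Any, Dict


def docker_buildconfig_with_secret(
    name: str, git_uri: str, secret_name: str, destination_dir: str
) -> Dict[str, Any]:
    """Build a Docker-strategy BuildConfig that injects a secret as input."""
    return {
        "apiVersion": "build.openshift.io/v1",
        "kind": "BuildConfig",
        "metadata": {"name": name},
        "spec": {
            "source": {
                "git": {"uri": git_uri},
                # The secret's files are copied into destinationDir, where
                # the Dockerfile can reference them during the build.
                "secrets": [
                    {
                        "secret": {"name": secret_name},
                        "destinationDir": destination_dir,
                    }
                ],
            },
            "strategy": {"type": "Docker", "dockerStrategy": {}},
            "output": {"to": {"kind": "ImageStreamTag", "name": f"{name}:latest"}},
        },
    }


if __name__ == "__main__":
    manifest = docker_buildconfig_with_secret(
        name="demo-app",                               # hypothetical
        git_uri="https://example.com/org/demo-app.git",  # hypothetical
        secret_name="subscription-credentials",        # hypothetical
        destination_dir="secrets",                     # hypothetical
    )
    print(json.dumps(manifest, indent=2))
```

The secret named here must already exist in the same namespace as the BuildConfig before the build starts.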

Conclusion

As a result of this work, we have a simplified set of build workflows (they are both Dockerfile builds at the core) that are not dependent on the node host having a specific container runtime available. Dockerfiles that built under OpenShift 3.x will continue to build under OpenShift 4.x and S2I builds will continue to function as well. The actual BuildConfig API is unchanged, so a BuildConfig from a v3.x cluster can be imported into a v4.x cluster and work without modification. We hope cluster administrators enjoy this improved abstraction between the build component and the cluster infrastructure.

OpenShift 4 is available today. You can try it at:
