Red Hat blog

Illustrated by: Mary Shakshober

The second workshop in our Customer Empathy Workshop series was held during KubeCon San Diego 2019 and was focused on multicluster management and GitOps. We collaborated with Red Hat OpenShift customers from six different companies ranging from finance to energy to manufacturing.

Over the course of 2.5 hours, we learned about our customers’ OpenShift environments, shared our design thinking strategy for tackling development challenges, and brainstormed together on how to improve the management of multiple clusters, potentially through a GitOps approach.

By the end of the workshop, we had gained valuable insight into our customers’ unique challenges with multicluster management. This insight sparked collaboration: we partnered with users so that together we can improve the user experience. Here's how it all happened.

What we learned

During this workshop, we ran a few different hands-on activities to drive the discussion and guide our customers through a design thinking approach to problem-solving.

We focused on the first three steps: empathize, define, and ideate.

Empathize

Before diving in, we took a little time to get to know our customers better. Customers took turns sharing their roles, their responsibilities, and how their infrastructure is currently set up. Then they shared where they hoped to take their environments in the future.

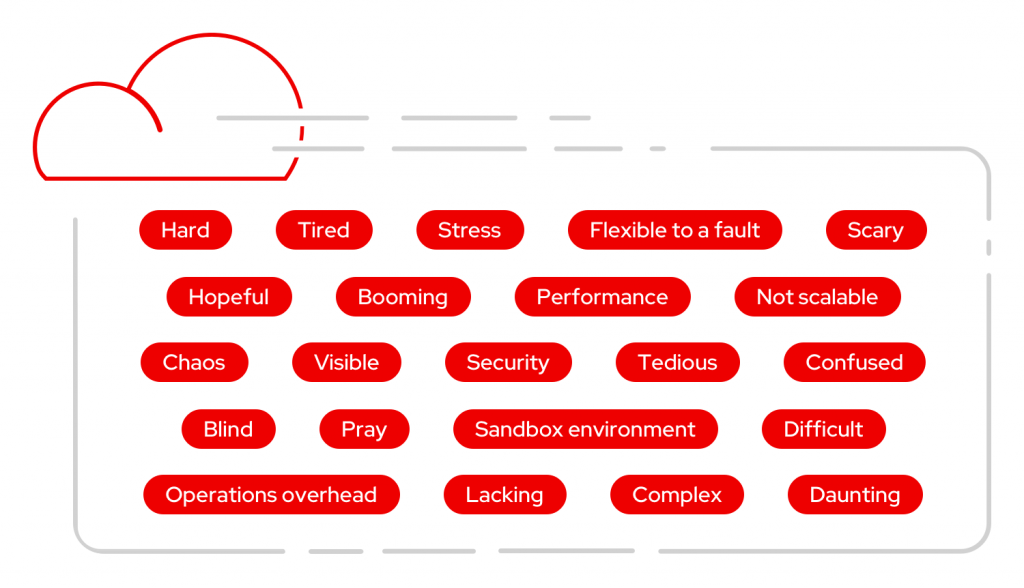

Next, we wanted to understand what kinds of emotions were evoked by the terms “multicluster management” and “GitOps.” So we asked participants to write down some reaction words. These ranged from “stress,” “complex,” and “chaos” to “hopeful,” “booming,” and “flexible.”

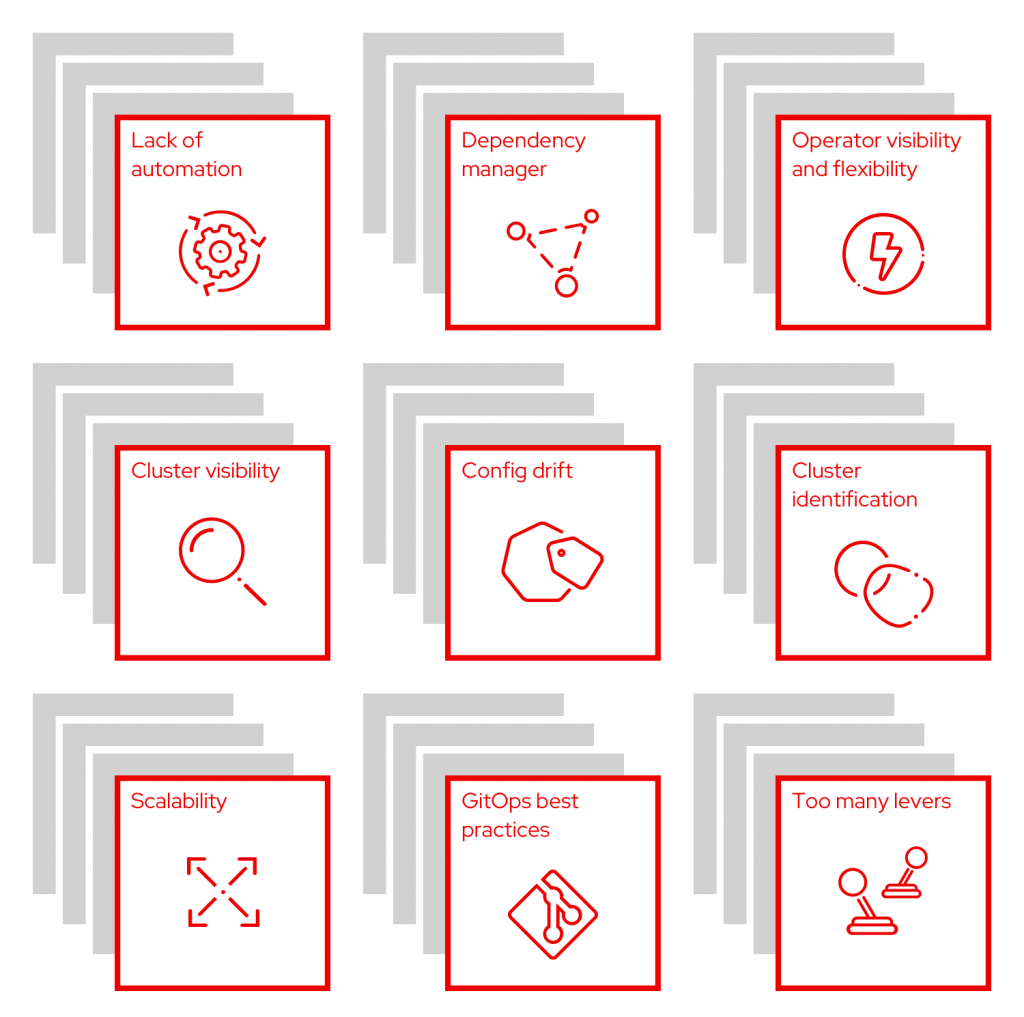

Then we pulled out the Sharpies and sticky notes. Participants brainstormed some of their pain points with managing multiple clusters and using GitOps. We reviewed each sticky note and grouped similar problems together. This allowed us to see the big picture as common themes emerged from both groups.

Here are some of those themes, along with paraphrased responses from customers:

- Lack of automation: “It’s hard to push config changes out to all my clusters.”

- Dependency manager: “It’s hard to keep track of cluster settings and services required by certain apps.”

- Operator visibility and flexibility: “As a user, I don't really understand what the Operator is doing; it is a black box to me. Sometimes I need to change things but the Operator prevents this. I need to balance ease of use with flexibility.”

- Cluster visibility: “There is a lack of alerts, which makes it hard to understand the state of the entire cluster. I find it even harder to get visibility from multiple clusters. Therefore, I am reactive rather than proactive.”

- Cluster identification: “I often switch between a lot of clusters, making it easy to lose context on which cluster I am currently working on.”

- Configuration drift: “I need to take immediate action and GitOps takes too long. Sometimes I forget to retrofit the changes back, or I don't have GitOps in place and forget to apply the changes to all the clusters I manage. It’s easy to get configuration drift with quota management and RBAC management across clusters.”

- Scalability: “I’ve never had to manage so many clusters before. I used to only have one big cluster, but now we might have hundreds.”

- GitOps best practices: “I don't know where to start with GitOps; there are so many choices/options and technology is moving too fast. How can I bring all the tools together to create a solution for my problem?”

- Too many levers: “There are so many flags you can set in Kubernetes, making it really easy to mess things up.”

Define

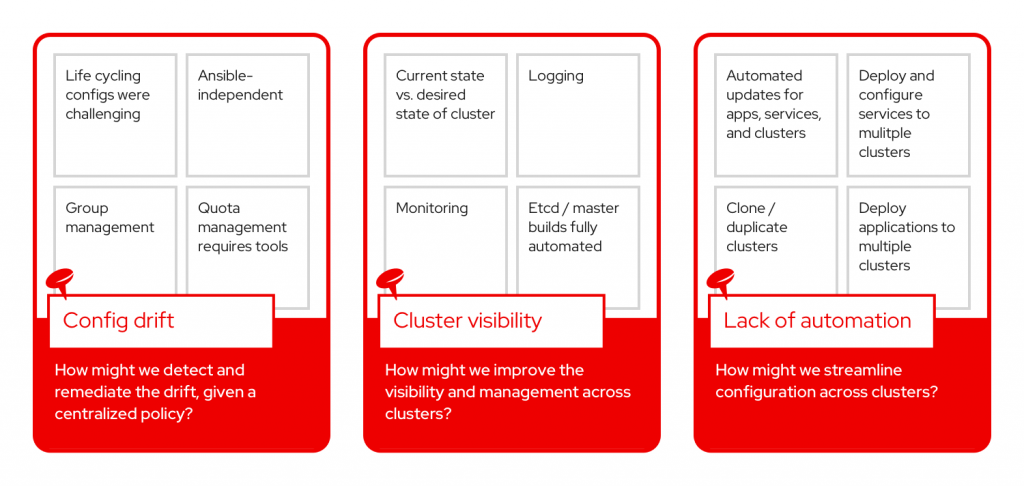

After identifying common pain points and converging on major themes, we set out to highlight key problems that we could focus on. Each group selected a theme from the previous exercise and rewrote it as a problem statement:

- How might we detect and remediate the drift, given a centralized policy?

- How might we improve the visibility and management across clusters?

- How might we streamline configuration across clusters?

Ideate

In the ideation portion, participants proposed solutions to their group's problem statement and encouraged other group members to build on an idea or offer a different one. This is when the creativity came out and the collaborative nature of design thinking really shone. Participants then shared and discussed their problem statements and potential solutions with the room. Finally, every participant voted on the solutions they most wanted to see implemented for each problem statement; these votes will help the OpenShift team prioritize new features in the console.

Problem statement: How might we detect and remediate the drift, given a centralized policy?

- Best practices or place to start with GitOps

- Detection of manual changes

- Export existing cluster config

- Visualize config/policy to customer

- Configs for different environments

- Dynamic policy

Problem statement: How might we improve the visibility and management across clusters?

- Single pane of glass

- Self healing

- Add replication of clusters

Problem statement: How might we streamline configuration across clusters?

- Central cluster management, hub and spoke

- Notify users when image has been patched

The lists above show the highest-voted solutions for each problem statement.

What’s next

There's so much more to come. In the next few weeks, we'll dive deeper into customer ideas and finish the design thinking process by producing designs, prototyping them, and finally testing their validity.

We also want you to join us. To help influence the future of OpenShift, sign up to be notified about research participation opportunities or provide feedback on your experience by filling out this brief survey. If you'd like to attend the next workshop, keep an eye on the OpenShift Commons calendar for upcoming events. Feel free to reach out by email if you have any questions.