Red Hat blog

It’s almost become boring to say that Kubernetes has become boring. This massive open source project has now been in development for so long that the major changes from revision to revision tend to focus on stability, reliability and performance: the sorts of changes that make life easier every day, but do not look so exciting when listed out in a change log.

In truth, nothing in Kubernetes 1.17 will drastically change how you use containers, but the changes in this release will result in more powerful and dependable architectures, capable of scaling to meet enterprise needs without buckling under pressure. Indeed, Kubernetes is now not only a stable platform for constructing cloud-native infrastructure, it is a stable foundation for the entire ecosystem of services and projects which rely upon it: from Prometheus to Istio to Fluentd to data services layers and Operators.

That’s not to say there aren’t major enhancements inbound in this release of the platform. One of those new additions, volume snapshots, can directly affect data services. The feature reaches beta in this release, after a considerable amount of time in development.

Volume Snapshots for better backup, data replication and migration

After two years and two alpha implementations, volume snapshots are beta in this release. Volume snapshots allow users to take a point-in-time snapshot of the data stored on a PersistentVolume and restore it later as a new volume. Snapshots serve a wide variety of use cases, from backup to data replication or migration.
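As a rough illustration of taking a snapshot and restoring it as a new volume, the Go sketch below prints the two manifests involved: a VolumeSnapshot that points at an existing claim, and a new PersistentVolumeClaim whose `dataSource` references that snapshot. It assumes the beta API group and version `snapshot.storage.k8s.io/v1beta1`. The structs are simplified, hand-written mirrors of the manifest shapes rather than the real client types, and every object name (`data-pvc`, `data-snap`, `csi-snapclass`, `csi-sc`, `data-restored`) is hypothetical.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Simplified mirrors of the manifest shapes (assumption: not the real client types).
type metadata struct {
	Name string `json:"name"`
}

type snapshotSource struct {
	PersistentVolumeClaimName string `json:"persistentVolumeClaimName"`
}

type snapshotSpec struct {
	VolumeSnapshotClassName string         `json:"volumeSnapshotClassName"`
	Source                  snapshotSource `json:"source"`
}

type volumeSnapshot struct {
	APIVersion string       `json:"apiVersion"`
	Kind       string       `json:"kind"`
	Metadata   metadata     `json:"metadata"`
	Spec       snapshotSpec `json:"spec"`
}

type dataSource struct {
	Name     string `json:"name"`
	Kind     string `json:"kind"`
	APIGroup string `json:"apiGroup"`
}

type resourceRequests struct {
	Requests map[string]string `json:"requests"`
}

type claimSpec struct {
	StorageClassName string           `json:"storageClassName"`
	DataSource       dataSource       `json:"dataSource"`
	AccessModes      []string         `json:"accessModes"`
	Resources        resourceRequests `json:"resources"`
}

type persistentVolumeClaim struct {
	APIVersion string    `json:"apiVersion"`
	Kind       string    `json:"kind"`
	Metadata   metadata  `json:"metadata"`
	Spec       claimSpec `json:"spec"`
}

// newSnapshot describes a point-in-time snapshot of the volume behind an existing claim.
func newSnapshot(name, snapshotClass, sourceClaim string) volumeSnapshot {
	return volumeSnapshot{
		APIVersion: "snapshot.storage.k8s.io/v1beta1", // assumed beta API version
		Kind:       "VolumeSnapshot",
		Metadata:   metadata{Name: name},
		Spec: snapshotSpec{
			VolumeSnapshotClassName: snapshotClass,
			Source:                  snapshotSource{PersistentVolumeClaimName: sourceClaim},
		},
	}
}

// newRestoreClaim describes a new claim that is pre-populated from a snapshot.
func newRestoreClaim(name, storageClass, snapshot, size string) persistentVolumeClaim {
	return persistentVolumeClaim{
		APIVersion: "v1",
		Kind:       "PersistentVolumeClaim",
		Metadata:   metadata{Name: name},
		Spec: claimSpec{
			StorageClassName: storageClass,
			DataSource: dataSource{
				Name:     snapshot,
				Kind:     "VolumeSnapshot",
				APIGroup: "snapshot.storage.k8s.io",
			},
			AccessModes: []string{"ReadWriteOnce"},
			Resources:   resourceRequests{Requests: map[string]string{"storage": size}},
		},
	}
}

func main() {
	// All names below are hypothetical placeholders.
	objects := []interface{}{
		newSnapshot("data-snap", "csi-snapclass", "data-pvc"),
		newRestoreClaim("data-restored", "csi-sc", "data-snap", "1Gi"),
	}
	for _, obj := range objects {
		out, err := json.MarshalIndent(obj, "", "  ")
		if err != nil {
			panic(err)
		}
		fmt.Println(string(out))
	}
}
```

The program only prints JSON and does not talk to a cluster. Each printed object could be saved to a file and applied with `kubectl apply -f`, provided the cluster runs a CSI driver with snapshot support and has a matching snapshot class.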

Kubernetes can use the snapshot feature of any storage backend that implements Container Storage Interface (CSI) 1.0, an interface that already has more than 50 drivers. It is no longer tied to a couple of hard-coded storage providers, as it was in the first alpha implementation.

The snapshot functionality is in a new external snapshot-controller and csi-snapshotter sidecar. Moving these pieces outside of the kube-controller-manager and kubelet allowed for faster development, better separation of concerns, and proof of the extensibility of the Kubernetes platform.

- snapshot-controller, deployed during Kubernetes installation, manages the whole lifecycle of volume snapshots, including taking snapshots, binding of pre-existing snapshots, and restoring or deleting snapshots.

- csi-snapshotter sidecar, which runs in the same pod as a CSI driver and is deployed by a CSI driver vendor, serves as a bridge between the snapshot controller and the CSI driver and issues CSI requests to the driver, so CSI drivers do not need to worry about Kubernetes internals.

Volume snapshots help users consume abstract storage resources for more efficient backup, data replication, migration and more, but there is still work to do. In Kubernetes 1.17, functionality is limited to taking and restoring snapshots of a single volume, but we already have plans to add new features in future Kubernetes releases, such as application-consistent snapshots using execution hooks and snapshots of whole StatefulSets.

Scheduling changes

Over the past several releases, the scheduling special interest group (sig-scheduling) has put together a proposal to improve the extensibility of the default Kubernetes scheduler. The current extension mechanism is very limited, as it only allows two operations to be specified, namely Filtering and Prioritizing. Additionally, it requires making external HTTP requests, which might significantly extend the scheduling time and lower the overall throughput of the scheduler.

The new mechanism will allow plugins to be decoupled from core Kubernetes code, while also allowing easier exchange of information between plugins and the scheduler (such as access to the scheduler cache).

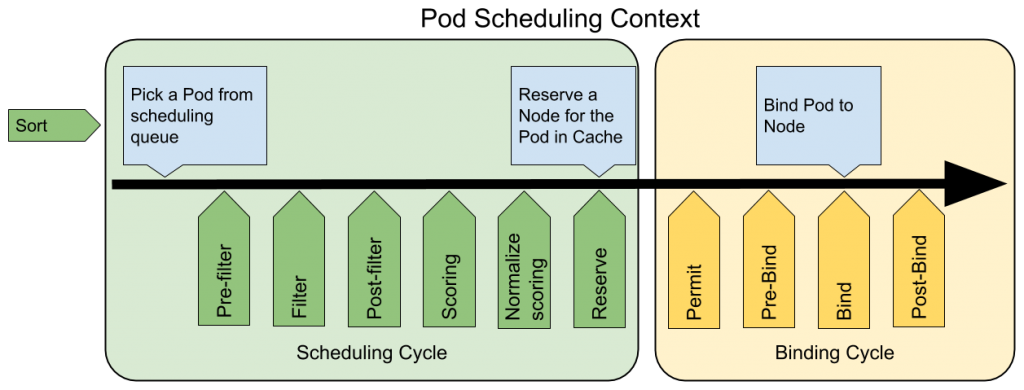

The main extension points depicted in the picture above are as follows:

- Sorting - responsible for sorting the pods in the scheduling queue.

- Pre-filtering - used for pre-processing the information about pods being currently processed.

- Filtering - the process responsible for matching a pod with a particular set of nodes the pod can run on.

- Post-filtering - used mainly for information, such as to update the plugin internal state. Alternatively, it can be used as a pre-scoring step.

- Scoring - responsible for ranking nodes. Scoring has two phases:

  - Scoring - the actual scoring algorithm.

  - Normalize Scoring - updates the score from the previous phase to fit within the [MinNodeScore, MaxNodeScore] range.

- Reserve - an informational point, which plugins can use to update their internal state after scoring completes.

- Permit - responsible for approving, denying or delaying a pod.

- Pre-binding - performs any work required before binding a pod.

- Binding - assigns a pod to an actual node.

- Post-binding - another informational point, which plugins can use to update their internal state after binding completes.
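To make the Filtering, Scoring and Normalize Scoring steps above more concrete, here is a minimal, self-contained Go sketch of that part of a scheduling cycle. It does not use the real scheduler framework: the `pod` and `node` types, the `filterPlugin` and `scorePlugin` function types, the `fitsCPU` and `leftoverCPU` plugins, and the score range of 0 to 100 are all assumptions made for illustration.

```go
package main

import (
	"fmt"
	"sort"
)

// Assumed score range for this sketch.
const (
	minNodeScore = 0
	maxNodeScore = 100
)

// Hypothetical, heavily simplified stand-ins for real pods and nodes.
type pod struct {
	name     string
	cpuMilli int
}

type node struct {
	name         string
	freeCPUMilli int
}

// Hypothetical plugin shapes: a filter says yes or no, a scorer returns a raw score.
type filterPlugin func(p pod, n node) bool
type scorePlugin func(p pod, n node) int

// fitsCPU keeps only nodes with enough free CPU for the pod.
func fitsCPU(p pod, n node) bool { return n.freeCPUMilli >= p.cpuMilli }

// leftoverCPU returns an unbounded raw score: the CPU left after placing the pod.
func leftoverCPU(p pod, n node) int { return n.freeCPUMilli - p.cpuMilli }

// normalize linearly rescales raw scores into [minNodeScore, maxNodeScore].
func normalize(raw map[string]int) map[string]int {
	out := make(map[string]int, len(raw))
	first := true
	var lo, hi int
	for _, s := range raw {
		if first || s < lo {
			lo = s
		}
		if first || s > hi {
			hi = s
		}
		first = false
	}
	for name, s := range raw {
		if hi == lo {
			out[name] = maxNodeScore // all candidates are equally good
			continue
		}
		out[name] = minNodeScore + (s-lo)*(maxNodeScore-minNodeScore)/(hi-lo)
	}
	return out
}

// schedule filters the nodes, scores the survivors, normalizes the scores and
// returns the best node. It reports false when no node passes the filter.
func schedule(p pod, nodes []node, filter filterPlugin, score scorePlugin) (string, bool) {
	raw := map[string]int{}
	for _, n := range nodes {
		if filter(p, n) {
			raw[n.name] = score(p, n)
		}
	}
	if len(raw) == 0 {
		return "", false
	}
	scores := normalize(raw)
	names := make([]string, 0, len(scores))
	for name := range scores {
		names = append(names, name)
	}
	// Highest score first; ties are broken by name to keep the result deterministic.
	sort.Slice(names, func(i, j int) bool {
		if scores[names[i]] != scores[names[j]] {
			return scores[names[i]] > scores[names[j]]
		}
		return names[i] < names[j]
	})
	return names[0], true
}

func main() {
	nodes := []node{
		{name: "node-a", freeCPUMilli: 500},
		{name: "node-b", freeCPUMilli: 2000},
		{name: "node-c", freeCPUMilli: 1200},
	}
	p := pod{name: "web", cpuMilli: 1000}
	if chosen, ok := schedule(p, nodes, fitsCPU, leftoverCPU); ok {
		fmt.Printf("bind %s to %s\n", p.name, chosen) // prints "bind web to node-b"
	} else {
		fmt.Printf("no feasible node for %s\n", p.name)
	}
}
```

In this sketch the plugins are plain functions called in-process, which reflects the motivation described earlier: no HTTP round trip is needed per scheduling decision. The remaining extension points (Reserve, Permit, the binding steps) are left out to keep the example short.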

More information about the new scheduling framework is available in the KEP.

It’s important to note that the new mechanism is not meant only for extenders. To improve the maintainability of the main scheduler components, over the course of the next few releases sig-scheduling will be rewriting all the pre-existing scheduler logic to follow the new scheduler framework.

Volume snapshots and a new scheduler mechanism are two of many pieces that increase the stability and extensibility of Kubernetes.

Kubernetes 1.17 is expected to be available for download on GitHub this week. This release of Kubernetes is planned to be integrated into a future release of Red Hat OpenShift.

About the author

Red Hatter since 2018, tech historian, founder of themade.org, serial non-profiteer.