Part 2: Rethinking our Application Lifecycle

Moving to OpenShift Container Platform in 2016 spurred us to rethink our application lifecycle. Our various application teams are free to choose their own tooling and processes. But when it came time to move code into production, developers had to wait for Release Engineering to onboard the code to our IT team’s pipeline for validation.

It’s unrealistic to think that our IT team can do everything without slowing down innovation. So we decided to give application teams control over the entire release process—from development through production. To make that work, we needed common tools, common build processes, and standard validation processes for infrastructure, security, and deployment.

We’re well on our way to giving application teams control over release. This post explains what we’ve done so far and touches on future plans.

Our Goal: One CI/CD Pipeline for all Cloud Environments

Red Hat currently has multiple OpenShift Container Platform environments: several for pre-production, one for primary production, and one for disaster recovery (DR) production. We’ll soon add OpenShift clusters in AWS, and we might later add clusters in Microsoft Azure and Google Cloud. We wanted a single CI/CD pipeline, which we call a federated pipeline, that could deploy to any cloud environment.
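One way to picture a federated pipeline is as a single deploy routine that talks to every environment through the same small interface. The following is a minimal sketch of that idea, not a description of the actual pipeline: the `DeployTarget` interface, the `RecordingTarget` stand-in, the environment names, and the registry URL are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Protocol, Sequence


class DeployTarget(Protocol):
    """Anything the pipeline can deploy an image to (hypothetical interface)."""

    name: str

    def deploy(self, image: str) -> str:
        ...


@dataclass
class RecordingTarget:
    """Stand-in for a real cluster client; it only records what was deployed."""

    name: str
    deployed: list[str] = field(default_factory=list)

    def deploy(self, image: str) -> str:
        self.deployed.append(image)
        return f"{image} -> {self.name}"


def deploy_everywhere(image: str, targets: Sequence[DeployTarget]) -> list[str]:
    """Run the same deploy step against every target, in order."""
    return [target.deploy(image) for target in targets]


# Hypothetical environment names and image reference, for illustration only.
targets = [RecordingTarget("preprod"), RecordingTarget("prod"), RecordingTarget("dr")]
results = deploy_everywhere("registry.example.com/app:1.0", targets)
```

Because the pipeline code depends only on the interface, adding a new cloud environment in this sketch means adding one more target, not changing the deploy routine.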

OpenShift Container Platform didn’t have pipeline capabilities when we adopted it in 2016. Therefore, we decided to build our own pipeline, adopting OpenShift pipeline capabilities as they mature. OpenShift makes a gradual transition possible because it integrates well with other tooling via open APIs.

When planning the pipeline, we decided to:

- Abstract the CI/CD pipeline from OpenShift to make deployment consistent across all of our cloud environments—first OpenShift and later the others.

- Use Jenkins as the framework. Developers who aren’t already familiar with it can learn it, and they don’t have to write their own Jenkins configuration from scratch because we provide Jenkins Job Builder templates.

- Use Ansible by Red Hat and Puppet for platform automation and management. Ansible doesn’t require a software agent on the remote machines it manages. That means it doesn’t consume any resources on the machines when not managing them.

- Use the image-based deployment method with Red Hat Virtualization.

- Later add image scanning and validation using Red Hat CloudForms.
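To illustrate the template idea from the Jenkins decision above, here is a minimal sketch of filling in a shared job definition with one application's details. It does not use Jenkins Job Builder itself, whose real templates are YAML files with far more detail. The template text, the `render_job` helper, and the team, application, and repository names are all hypothetical.

```python
from string import Template

# Hypothetical shared template maintained centrally; a real Jenkins Job
# Builder template is YAML and carries far more detail than this.
JOB_TEMPLATE = Template("job: $team-$app-deploy\nrepo: $repo\nbranch: $branch\n")


def render_job(team: str, app: str, repo: str, branch: str = "main") -> str:
    """Fill in the shared template with one application's details."""
    return JOB_TEMPLATE.substitute(team=team, app=app, repo=repo, branch=branch)


# Hypothetical team, application, and repository names.
job = render_job(
    "payments",
    "invoice-api",
    "https://git.example.com/payments/invoice-api.git",
)
print(job)
```

The point of the sketch is the division of labor: the shared template encodes the standard job shape once, and each team supplies only the values that differ.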

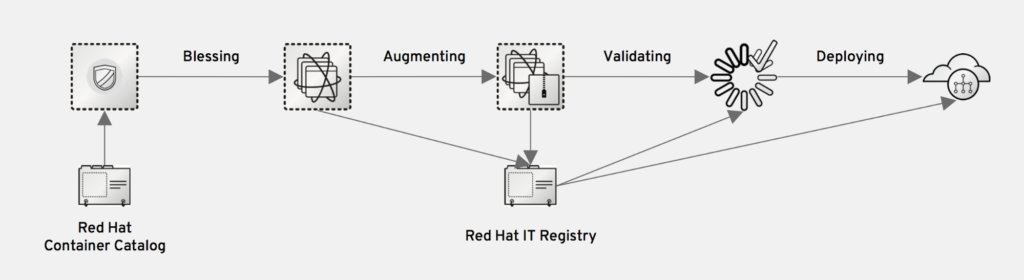

The federated CI/CD pipeline standardizes our Jenkins processes and hooks them into OpenShift. So far we’re using the pipeline to deploy images to all of our OpenShift cloud environments. Later we’ll use the pipeline to also deploy to public clouds. Here’s how it works:

- Developers start with a blessed image from our IT registry. Blessed images have hooks for standards, consistent versions, consistent configurations, and a security policy.

- Developers augment the image to meet the business need, choosing any tooling. Application configuration isn’t an afterthought; it’s integrated into the development process.

- In the open-prototyping phase of the project, application teams deploy to the pre-production environment to build and test their applications.

- When teams have finished their application prototype, including the augmented image, they validate the prototype against our enterprise security requirements.

- After the prototype passes validation, development teams input their templated application into the standard pipeline tools to create an application CI/CD pipeline.
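The steps above amount to a series of gates that a prototype has to clear before it gets its own CI/CD pipeline. The sketch below models those gates as simple checks. It is an illustration under stated assumptions, not the pipeline's implementation: the `Prototype` fields, the `ready_for_pipeline` helper, and the blessed image name are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical contents of the IT registry's list of blessed base images.
BLESSED_BASES = {"registry.example.com/base/rhel7:latest"}


@dataclass
class Prototype:
    """The facts about a prototype that the gates below look at."""

    name: str
    base_image: str
    passed_security_validation: bool = False
    templated: bool = False


def ready_for_pipeline(proto: Prototype) -> list[str]:
    """Return the reasons a prototype cannot enter the standard pipeline yet."""
    problems = []
    if proto.base_image not in BLESSED_BASES:
        problems.append("base image is not a blessed image")
    if not proto.passed_security_validation:
        problems.append("security validation has not passed")
    if not proto.templated:
        problems.append("application is not templated")
    return problems


# A prototype built on a blessed image that has not been validated yet.
proto = Prototype("invoice-api", "registry.example.com/base/rhel7:latest")
blockers = ready_for_pipeline(proto)

# The same prototype after validation and templating: no blockers remain.
proto.passed_security_validation = True
proto.templated = True
cleared = ready_for_pipeline(proto)
```

Returning the list of blockers, not a bare pass or fail, gives the team something to act on at each step.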

Many of these concepts and technologies are new to our development teams, so our PaaS team remains heavily involved. As developers gain experience with PaaS, the PaaS team will slowly become less involved in application lifecycle management. Our goal: Deploy as fast as we can code.

Figure 1: Federated CI/CD Pipeline

Outcomes: Deployment is Faster, More Secure, More Consistent

Abstracting CI/CD from OpenShift Container Platform has improved speed, security, and standards—the forces behind innovation.

Speed. Application teams no longer have to wait for Release Engineering to validate and deploy their images. The pipeline also makes it easier for our IT team to provide time-saving services for developers. In mid-2017 we built a prototype to replace our old performance and development tool in just six weeks from start to production.

Security. Our standard CI/CD pipeline gives us image custody and immutable images throughout the deployment process. We’ve added security validations that images have to pass before they’re deployed into production.
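One common way to check that an image has stayed unchanged between stages is to record a content digest at build time and compare it again before each deployment. The sketch below shows that general technique only. It is an assumption for illustration, not a statement of how the pipeline enforces image custody, and the `digest` and `verify_custody` helpers are hypothetical names.

```python
import hashlib


def digest(image_bytes: bytes) -> str:
    """Content digest of an image, in the familiar 'sha256:<hex>' form."""
    return "sha256:" + hashlib.sha256(image_bytes).hexdigest()


def verify_custody(image_bytes: bytes, recorded_digest: str) -> bool:
    """True if the image content still matches the digest recorded at build time."""
    return digest(image_bytes) == recorded_digest


# Placeholder bytes standing in for real image content.
built_image = b"example image layers"
recorded = digest(built_image)

unchanged_ok = verify_custody(built_image, recorded)
tampered_ok = verify_custody(built_image + b" extra", recorded)
```

Any change to the content, however small, produces a different digest, so a mismatch at a later stage signals that the image is no longer the one that was validated.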

Standards. A standard image lifecycle improves consistency across environments and simplifies application management. Our standard CI/CD validation process strengthens security, increases quality, and speeds up the application lifecycle. Teams now take an active role in operating their applications, while also implementing some of the “gates and guardrails” that are consistent with enterprise security and enterprise architecture guidelines.

Our Next Steps

Our planning is changing as OpenShift evolves. For now, we expect to continue using our abstracted CI/CD pipeline while adopting new OpenShift Container Platform capabilities as they mature. An example is support for multi-cloud deployment. We’re considering the following changes:

- Investigating integration of the pipeline with Ansible Tower by Red Hat. Ansible Tower makes it simpler to manage and run playbooks for different application teams. Application teams can gain even more control over the application lifecycle because Ansible Tower provides federated management and runtime.

- Integrating parts of the pipeline with Red Hat CloudForms to provide reports, dashboards, and metadata from all of our cloud environments. These include Red Hat Virtualization, AWS, OpenStack, Google Cloud Platform, Microsoft Azure, and bare metal. Red Hat CloudForms also provides image scanning and validation.

- Deploying Red Hat JBoss BPM (Business Process Management), Red Hat JBoss Data Virtualization, and Red Hat 3scale API Management on the OpenShift Container Platform.

- Continuing to improve our federated CI/CD pipeline by adding innovations that our application teams have added to their own CI pipelines. Examples include load testing, UX testing, and additional security validations.

- Implementing OpenShift Source-to-Image (S2I) to give application teams more control over deployment.

- Investigating Open Service Broker (OSB). We’re intrigued by OSB’s ability to automate the provisioning of projects and of the IT services they depend on, such as databases and network ACLs.

Check back for the next post in this series, which describes our evolving infrastructure. To learn about the changes we’ve made to our organization and culture while moving to OpenShift in a multi-cloud environment, read Part 1.

About the authors

Drew McMillen is Senior Manager, IT Compute Services at Red Hat.
