Istio Multicluster is a feature of Istio (the basis of Red Hat OpenShift Service Mesh) that extends the service mesh across multiple Kubernetes or Red Hat OpenShift clusters. The primary goal of this feature is to control services deployed across multiple clusters with a single control plane.

The main requirement for Istio multicluster to work is that the pods in the mesh and the Istio control plane can talk to each other. This implies that pods need to be able to open connections between clusters.

In a previous article, this concept was demonstrated by connecting OpenShift SDNs with a network tunnel.

Assuming this requirement can be met, either with the above approach or a similar one, the following describes how you can install Istio Multicluster.

And of course, the usual disclaimer: the steps explained in this post are not officially supported by Red Hat.

The Architecture

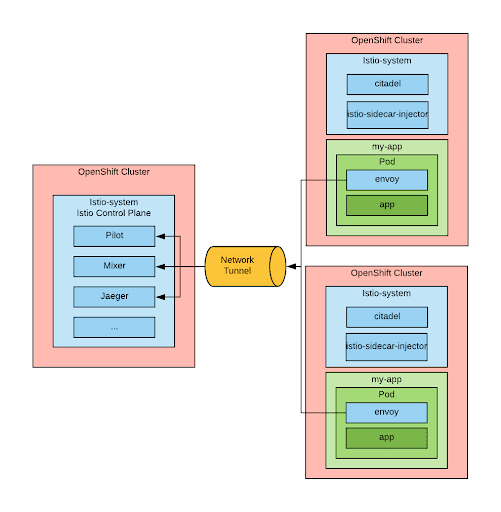

The architecture supporting Istio Multicluster makes use of one Kubernetes cluster hosting the Istio control plane, while the other clusters will only host the Istio Remote components, which consist of:

- Citadel for distributing the certificates.

- The istio-sidecar-injector to inject the Istio sidecar automatically.

The injected sidecars are configured to talk to the main control plane endpoints (this is where IP packet routability between clusters is needed).

In order to inform the Istio control plane that it’s working with remote clusters, a secret must be created and labeled with: istio/multiCluster: 'true' in the istio-system namespace for each additional cluster. The content of the secret must be the kubeconfig file to be used to connect to the remote cluster.
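As a minimal sketch, such a secret could look like the following. The secret name, the data key, the user name, and the token placeholder are assumptions made for illustration; only the label, the namespace, and the fact that the content is a kubeconfig come from the description above.

```yaml
# Sketch of a remote-cluster secret, created in the cluster that hosts the control plane.
apiVersion: v1
kind: Secret
metadata:
  name: cluster2                  # hypothetical: one secret per remote cluster
  namespace: istio-system
  labels:
    istio/multiCluster: "true"    # tells the control plane this is a remote cluster
type: Opaque
stringData:
  cluster2: |                     # hypothetical key; the value is the kubeconfig
    apiVersion: v1
    kind: Config
    clusters:
    - name: cluster2
      cluster:
        server: https://<cluster2_url>:<cluster2_port>
    users:
    - name: istio-remote-reader   # hypothetical user name
      user:
        token: <service_account_token>
    contexts:
    - name: cluster2
      context:
        cluster: cluster2
        user: istio-remote-reader
    current-context: cluster2
```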

The below picture depicts this architecture:

Per the current installation instructions, the Envoy sidecar containers should be configured to connect directly to the IPs of the control plane pods. This is obviously a limitation, as those IPs are ephemeral. The Istio multicluster documentation provides some suggestions on how to overcome this limitation.

Within the install process proposed here, we can use service IPs because our network tunnel supports that feature. Service IPs are stable and if the control plane were scaled up, they would load balance to one of the instances.
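To illustrate the idea, here is a sketch of Helm values for the remote components that point at service IPs instead of pod IPs. It assumes the remote components are installed from the upstream istio-remote Helm chart of the Istio 1.0 line, which to my knowledge exposed these settings; check the value names against the chart version you actually use. The IP placeholders are hypothetical.

```yaml
# Sketch: values for the istio-remote chart (value names assumed from the Istio 1.0 line).
global:
  # Service (cluster) IPs of the control plane components in the main cluster.
  # They are stable across pod restarts, unlike pod IPs.
  remotePilotAddress: <istio_pilot_service_ip>
  remotePolicyAddress: <istio_policy_service_ip>
  remoteTelemetryAddress: <istio_telemetry_service_ip>
```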

One more thing to consider when implementing this architecture is that the main Istio control plane exists in only one cluster, so if that cluster is lost, the Istio mesh essentially freezes. This blog post delves into the details of the consequences of losing each component of the Istio control plane.

Installation

The following assumptions are made prior to the installation:

- OpenShift clusters are already deployed and able to route SDN packets between them.

- Istio is installed in one of those clusters.

At this point, you can run an Ansible playbook to install Istio Multicluster.

The inventory should look like this:

    clusters:
      - name: <cluster1_name>
        url: <cluster1_url>:<cluster1_port>
        username: admin
        password: "{{ lookup('env','password') }}"
        istio_control_plane: true
      - name: <cluster2_name>
        url: <cluster2_url>:<cluster2_port>
        username: admin
        password: "{{ lookup('env','password') }}"
        istio_control_plane: false

This Ansible playbook installs the Istio Remote components on the clusters that do not host the Istio control plane and deploys the kubeconfig secrets to the cluster that does. In the Ansible inventory, mark the cluster where the Istio control plane is deployed with the istio_control_plane: true variable. Exactly one cluster can have this attribute set to true.
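The "exactly one control plane" rule can be checked before anything is installed. The play below is a sketch of such a guard and is not part of the playbook referenced in this post; it only assumes that the clusters variable from the inventory above is available to the play.

```yaml
# Sketch: fail early if the inventory does not mark exactly one control plane cluster.
- name: Validate the multicluster inventory
  hosts: localhost
  gather_facts: false
  tasks:
    - name: Ensure exactly one cluster hosts the Istio control plane
      ansible.builtin.assert:
        that:
          # Keep only the entries whose istio_control_plane is true, then count them.
          - clusters | selectattr('istio_control_plane') | list | length == 1
        fail_msg: "Exactly one cluster must set istio_control_plane: true"
```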

More information on the playbook and how to run it can be found here.

Installing bookinfo across multiple clusters

Now that Istio multicluster is ready, we can try to deploy the famous bookinfo application across multiple clusters.

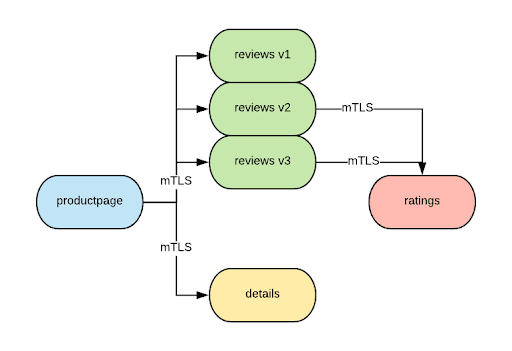

To test the capability of Istio multicluster, we are going to enable mTLS between all the components of the application and 1-way TLS for inbound traffic.

As we will see, we have quite a few things to configure to the point that I felt like Ansible was needed to complete the configuration. You can find the playbook here.

Configuring inbound traffic

In Istio there is an assumption that all the traffic in and out of the mesh will go through one of the available gateways (ingress, egress). In simplest terms, the gateways mark the edge of the mesh and guarantee that inbound and outbound traffic is compliant with the policies defined in the mesh. We are going to comply with this rule.

We also want to expose the application with 1-way TLS.

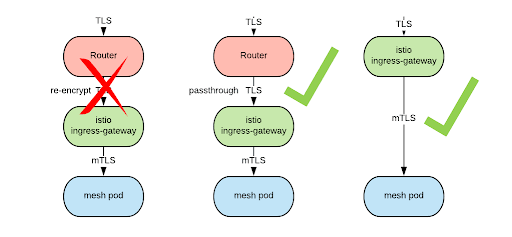

From my experiments, it is not possible to configure a route that handles the inbound traffic and re-encrypts it to the Istio ingress-gateway. This has to do with the fact that Envoy seems to reject the router health check, so from the perspective of the router the application is always down. However, it is possible to create a route with the passthrough termination policy. It is also possible to expose the Istio ingress-gateway directly with a load-balanced service. In either case, we need to supply a certificate to the Istio ingress-gateway pod. In our example, we are going to expose the Istio ingress-gateway directly.
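The two options can be sketched as follows. The resource names, the bookinfo namespace placement, and the target port are assumptions for illustration; the pod label mirrors the selector used by the Gateway resource in this post. Only one of the two resources is needed.

```yaml
# Option 1 (sketch): a passthrough route; TLS is terminated by the ingress-gateway pod.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: bookinfo                    # hypothetical name
  namespace: bookinfo
spec:
  host: bookinfo.{{ bookinfo_domain }}
  to:
    kind: Service
    name: bookinfo-ingressgateway   # hypothetical service in front of the gateway pod
  port:
    targetPort: https
  tls:
    termination: passthrough
---
# Option 2 (sketch): expose the ingress-gateway directly with a load-balanced service.
apiVersion: v1
kind: Service
metadata:
  name: bookinfo-ingressgateway     # hypothetical name
  namespace: bookinfo
spec:
  type: LoadBalancer
  selector:
    istio: bookinfo-ingressgateway  # label of the dedicated ingress-gateway pod
  ports:
  - name: https
    port: 443
    targetPort: 443                 # assumes the gateway pod listens on 443
```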

For this example, we are also going to create a dedicated Istio ingress-gateway, as opposed to using the ingress-gateway that is created by default in the istio-system namespace. This dedicated Istio ingress-gateway will be created in the bookinfo namespace. Obviously, this will need to be replicated in every OpenShift cluster that we join. The final configuration is represented in the following diagram:

Configuring the Global Load Balancer in order to bring traffic to either one of the endpoints is outside the scope of this post, but here are some initial thoughts on how to do so.

In order to secure the inbound connection, we need to supply a certificate to the istio-ingress as a secret injected into the pod. We cannot use the service serving certificate secret feature to generate this certificate because this is an external facing certificate and this feature only generates certificates valid for use within the cluster. The process for creating that certificate is outside of the scope of this article, but in my automation, I used cert-manager with a self-signed issuer.
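For reference, a self-signed setup with cert-manager could look roughly like this. It is a sketch, not the exact resources from my automation: it uses the current cert-manager.io/v1 API (older releases used a different API group), and the issuer, certificate, and secret names are hypothetical. The resulting secret contains the tls.crt and tls.key entries and would have to be mounted in the ingress-gateway pod.

```yaml
# Sketch: self-signed issuer plus a certificate for the external bookinfo host.
apiVersion: cert-manager.io/v1
kind: Issuer
metadata:
  name: selfsigned                  # hypothetical name
  namespace: bookinfo
spec:
  selfSigned: {}
---
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: bookinfo-ingressgateway     # hypothetical name
  namespace: bookinfo
spec:
  secretName: bookinfo-ingressgateway-certs   # hypothetical; secret with tls.crt and tls.key
  commonName: bookinfo.{{ bookinfo_domain }}
  dnsNames:
  - bookinfo.{{ bookinfo_domain }}
  issuerRef:
    name: selfsigned
    kind: Issuer
```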

The relevant part of the gateway configuration is found below:

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: bookinfo-gateway
    spec:
      selector:
        istio: bookinfo-ingressgateway
      servers:
      - port:
          number: 443
          name: https
          protocol: HTTPS
        tls:
          mode: SIMPLE
          serverCertificate: /etc/istio/ingressgateway-certs/tls.crt
          privateKey: /etc/istio/ingressgateway-certs/tls.key
        hosts:
        - bookinfo.{{ bookinfo_domain }}

This snippet declares a gateway to the mesh that uses the bookinfo-ingressgateway pod that we have deployed (hence the selector). The gateway listens on port 443 for TLS connections, using the supplied certificates, and accepts connections for the bookinfo.{{ bookinfo_domain }} host. The second part of the configuration is shown below:

    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: bookinfo
    spec:
      hosts:
      - bookinfo.{{ bookinfo_domain }}
      gateways:
      - bookinfo-gateway
      http:
      - match:
        - uri:
            exact: /productpage
        - uri:
            exact: /login
        - uri:
            exact: /logout
        - uri:
            prefix: /api/v1/products
        route:
        - destination:
            host: productpage
            port:
              number: 9080

This states that there is a service (in the Istio sense of the word) that can be referred to as the host bookinfo.{{ bookinfo_domain }} and can be reached by connections coming from the bookinfo-gateway. Connections that match one of the given URIs are forwarded to the productpage service on port 9080. This way, the gateway knows where to forward inbound connections. Notice that an ingress gateway can serve multiple apps, potentially in multiple namespaces. In this case, we have opted to create a dedicated gateway.

Configuring mTLS

This part is relatively straightforward. We need to first inform Istio that we want all of our services protected with mTLS:

    apiVersion: "authentication.istio.io/v1alpha1"
    kind: "Policy"
    metadata:
      name: "mtls-bookinfo"
      namespace: "bookinfo"
    spec:
      peers:
      - mtls: {}
      targets:
      - name: details
      - name: reviews
      - name: ratings
      - name: productpage

Then, we need to create destination rules so that mTLS will be used when opening connections to our services. Here is an example:

    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: productpage
    spec:
      host: productpage
      trafficPolicy:
        tls:
          mode: ISTIO_MUTUAL
      subsets:
      - name: v1
        labels:
          version: v1
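The other services need an equivalent rule. As a sketch, the rule for reviews might look like the following, assuming the three versions (v1, v2, v3) that the upstream bookinfo sample deploys for that service; adjust the subsets to the versions you actually run.

```yaml
# Sketch: mTLS destination rule for the reviews service.
apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  trafficPolicy:
    tls:
      mode: ISTIO_MUTUAL      # clients use Istio-issued certificates for mTLS
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
  - name: v3
    labels:
      version: v3
```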

The resulting architecture is as follows:

Recall that these resources are Istio-specific, and because the Istio control plane exists only in one cluster, they need to be created in that cluster. They will, however, apply to all the services in the mesh as far as the mesh extends, even across clusters.

We need to take into account this asymmetry when creating multicluster automations.

Considerations on zone-aware routing

A very common requirement for geographical deployments is that when traffic enters a datacenter (or a cluster), it stays there as much as possible. This helps minimize hops between datacenters, which typically increase latency.

This is a concern for Istio multicluster deployments. Currently, I haven’t found a way to enforce this requirement, so, for now, when a load balancing decision is made, any target is as likely to be selected whether it's in the same zone or not.

Envoy supports zone-aware routing, but this feature does not seem to be leveraged by Istio at this point.

I consider this to be a sign of the youth of the project. There are discussions among the Istio community leaders on how to create this feature, so I think this will be eventually implemented.

Conclusion

Istio provides a control plane to control application behavior (mostly network-related). With the multicluster feature, we can extend this behavior across multiple clusters. As we have seen, this feature is still emerging (the application deployment process is complex and there is no support for zone-aware routing), but I believe that under the pressure of customers interested in using it, it will mature quickly.

And remember, Istio multicluster is not currently supported by Red Hat, but it is possible to experiment with it in sandbox environments.

About the author

Raffaele is a full-stack enterprise architect with 20+ years of experience. Raffaele started his career in Italy as a Java Architect then gradually moved to Integration Architect and then Enterprise Architect. Later he moved to the United States to eventually become an OpenShift Architect for Red Hat consulting services, acquiring, in the process, knowledge of the infrastructure side of IT.

Currently Raffaele covers a consulting position of cross-portfolio application architect with a focus on OpenShift. Most of his career Raffaele worked with large financial institutions allowing him to acquire an understanding of enterprise processes and security and compliance requirements of large enterprise customers.

Raffaele has become part of the CNCF TAG Storage and contributed to the Cloud Native Disaster Recovery whitepaper.

Recently Raffaele has been focusing on how to improve the developer experience by implementing internal development platforms (IDP).
