Red Hat blog

Think about this: what's something that exists today that will still exist 100 years from now? Better yet, what do you use on a daily basis today that you think will be used as frequently 100 years from now? Suffice to say, there isn't a whole lot out there with that kind of longevity. But there is at least one thing that will stick around: data. In fact, mankind is estimated to create 44 zettabytes (that's 44 trillion gigabytes, ladies and gentlemen) of data by 2020. While impressive, data is useless unless you actually do something with it. So now the question is, how do we work with all this information and how do we create value from it? Through machine learning and artificial intelligence, you - yes you - can tap into data and generate genuine, insightful value from it. Over the course of this series, you'll learn the basics of Tensorflow, machine learning, neural networks, and deep learning in a container-based environment.

Before we get started, I need to call out one of my favorite things about OpenShift. When using OpenShift, you get to skip all the hassle of building, configuring or maintaining your application environment. When I’m learning something new, I absolutely hate spending several hours of trial and error just to get the environment ready. I’m from the Nintendo generation; I just want to pick up a controller and start playing. Sure, there’s still some setup with OpenShift, but it’s much less. For the most part with OpenShift, you get to skip right to the fun stuff and learn about the important environment fundamentals along the way.

And that’s where we’ll start our journey to machine learning (ML): by deploying a Tensorflow & Jupyter container on OpenShift Online. Tensorflow is an open-source software library created by Google for Machine Intelligence. Jupyter Notebook is a web application that allows you to create and share documents that contain live code, equations, visualizations and explanatory text with others. Throughout this series, we’ll be using these two applications primarily, but we’ll also venture into other popular frameworks. By the end of this post, you’ll be able to run a linear regression (the “hello world” of ML) inside a container you built, running in a cloud. Pretty cool, right? So let's get started.

Machine Learning Setup

The first thing you need to do is sign up for OpenShift Online Dev Preview. That will give you access to an environment where you can deploy a machine learning app. We also need to make sure that you have the “oc” tools and docker installed on your local machine. Finally, you’ll need to fork the Tensorshift Github repo and clone it to your machine. I’ve gone ahead and provided the links here to make it easier.

- Sign up for the OpenShift Online Developer Preview

- Install the OpenShift command line tool

- Install the Docker Engine on your local machine

- Fork this repo on GitHub and clone it to your machine

- Sign into the OpenShift Console and create your first project called "<yourname>-tensorshift"
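Before moving on, it can save some head-scratching to confirm that both command-line tools are actually on your PATH. Here's a minimal sketch using only Python's standard library. The helper name `check_tools` is my own invention for illustration and isn't part of the Tensorshift repo:

```python
import shutil


def check_tools(tools=("oc", "docker")):
    """Map each tool name to True if an executable with that name is on PATH."""
    # check_tools is a hypothetical helper, not something the repo provides.
    return {tool: shutil.which(tool) is not None for tool in tools}


for tool, found in check_tools().items():
    print(f"{tool}: {'found' if found else 'missing'}")
```

If either one shows up as missing, go back to the install links above before continuing.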

Building & Tagging the Tensorflow docker image: "TensorShift"

Once you’ve got everything installed to the latest and greatest, change over to the directory where you cloned the repo and then run:

docker build -t registry.preview.openshift.com/<your_project_name>/tensorshift ./

Make sure to replace the stuff in "<>" with your environment information. Mine looked like this:

docker build -t registry.preview.openshift.com/nick-tensorflow/tensorshift ./

We'll be uploading our tensorshift image to the OpenShift Online docker registry in the next step, so we need to make sure it's tagged appropriately and ends up in the right place. Hence the -t registry.preview.openshift.com/nick-tensorflow/tensorshift we added to our docker build ./ command.
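If the tag layout feels opaque, it's just three pieces joined with slashes: registry host, project name, image name. Here's a tiny sketch of that. The function `image_tag` is a made-up helper for illustration, and the default registry host is simply the one used in this post:

```python
def image_tag(project, image, registry="registry.preview.openshift.com"):
    """Build the <registry>/<project>/<image> name passed to docker build -t."""
    # image_tag is a hypothetical helper; docker itself only sees the final string.
    return f"{registry}/{project}/{image}"


print(image_tag("nick-tensorflow", "tensorshift"))
# prints: registry.preview.openshift.com/nick-tensorflow/tensorshift
```

The project part has to match the OpenShift project you created, otherwise the push in the next step won't land where you expect.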

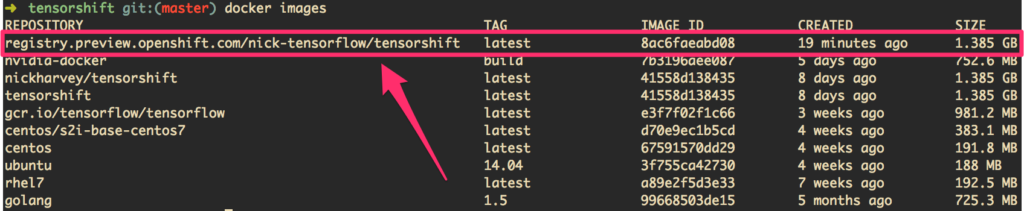

Once you hit enter, you’ll see docker start to build the image from the Dockerfile included in your repo (feel free to take a look at it to see what’s going on there). Once that’s complete, you should be able to run docker images and see that it’s been added.

Example output of docker images to show the newly built tensorflow image

Pushing TensorShift to the OpenShift Online Docker Registry

Now that we have the image built and tagged we need to upload it to the OpenShift Online Registry. However, before we do that we need to authenticate to the OpenShift Docker Registry:

docker login -u `oc whoami` -p `oc whoami -t` registry.preview.openshift.com

All that's left is to push it

docker push registry.preview.openshift.com/<your_project_name>/<your_image_name>

Deploying Tensorflow (TensorShift)

So far you’ve built your own Tensorflow docker image and published it to the OpenShift Online Docker registry. Well done!

Next, we’ll tell OpenShift to deploy our app using our Tensorflow image we built earlier.

oc new-app <image_name> --name=<appname>

You should now have a running containerized Tensorflow instance orchestrated by OpenShift and Kubernetes! How rad is that!

There’s one more thing we need to do to be able to access it through the browser. Admittedly, this next step is because I haven’t gotten around to fully integrating the Tensorflow docker image into the complete OpenShift workflow, but it’ll take all of 5 seconds for you to fix.

You need to go to your app in OpenShift and delete the service that’s running. Here's an example of how to use the web console to do it.

Example of how to delete the preconfigured services created by the TensorShift Image

Because we're using both Jupyter and Tensorboard in the same container for this tutorial we need to actually create the two services so we can access them individually.

Run these two oc commands to knock that out:

oc expose dc <appname> --port=6006 --name=tensorboard

oc expose dc <appname> --port=8888 --name=jupyter

Lastly, just create two routes so you can access them in the browser:

oc expose svc/tensorboard

oc expose svc/jupyter
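The pattern in those four commands is one service per port, then one route per service. As a rough sketch of that pattern (nothing more), here's a little Python that prints the same commands from a name-to-port mapping. `expose_commands` is a hypothetical helper, and "mlexample" is just the app name I happened to use:

```python
def expose_commands(appname, ports):
    """Return the oc commands that create one service and one route per port."""
    # expose_commands is a hypothetical helper; it only builds strings, it runs nothing.
    commands = []
    for name, port in ports.items():
        commands.append(f"oc expose dc {appname} --port={port} --name={name}")
    for name in ports:
        commands.append(f"oc expose svc/{name}")
    return commands


for cmd in expose_commands("mlexample", {"tensorboard": 6006, "jupyter": 8888}):
    print(cmd)
```

It doesn't run anything against your cluster; it only shows how the service names and ports line up.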

That's it for the setup! You should be all set to access your Tensorflow environment and Jupyter through the browser. Just run oc status to find the URL:

$ oc status

In project Nick TensorShift (nick-tensorshift) on server https://api.preview.openshift.com:443

http://jupyter-nick-tensorshift.44fs.preview.openshiftapps.com to pod port 8888 (svc/jupyter)

dc/mlexample deploys istag/tensorshift:latest

deployment #1 deployed 14 hours ago - 1 pod

http://tensorboard-nick-tensorshift.44fs.preview.openshiftapps.com to pod port 6006 (svc/tensorboard)

dc/mlexample deploys istag/tensorshift:latest

deployment #1 deployed 14 hours ago - 1 pod

1 warning identified, use 'oc status -v' to see details.
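If you'd rather not eyeball that output, here's a small sketch that pulls the route URLs out of it. It assumes the route lines look exactly like the ones in my output above ("<url> to pod port <port> (svc/<name>)"); other versions of oc may format things differently, and `find_routes` is a name I made up:

```python
import re

# Assumed line shape, taken from the sample output in this post.
ROUTE_LINE = re.compile(r"^\s*(https?://\S+) to pod port (\d+) \(svc/([\w-]+)\)")


def find_routes(status_text):
    """Return {service_name: (url, port)} for every route line in oc status output."""
    routes = {}
    for line in status_text.splitlines():
        match = ROUTE_LINE.match(line)
        if match:
            url, port, service = match.groups()
            routes[service] = (url, int(port))
    return routes


sample = """\
In project Nick TensorShift (nick-tensorshift) on server https://api.preview.openshift.com:443

http://jupyter-nick-tensorshift.44fs.preview.openshiftapps.com to pod port 8888 (svc/jupyter)
  dc/mlexample deploys istag/tensorshift:latest

http://tensorboard-nick-tensorshift.44fs.preview.openshiftapps.com to pod port 6006 (svc/tensorboard)
  dc/mlexample deploys istag/tensorshift:latest
"""

for service, (url, port) in find_routes(sample).items():
    print(service, port, url)
```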

On To The Fun Stuff

Get ready to pick up your Nintendo controller. Open <Linktoapp>:8888 and log into Jupyter using “Password” then create a new notebook like so:

Example of how to create a jupyter notebook

Now paste the following code into your newly created notebook:

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

learningRate = 0.01

trainingEpochs = 100

# Return evenly spaced numbers over a specified interval

xTrain = np.linspace(-2, 1, 200)

# Build noisy targets: y = 2x plus standard normal noise scaled by 0.33

yTrain = 2 * xTrain + np.random.randn(*xTrain.shape) * 0.33

#Create a placeholder for a tensor that will be always fed.

X = tf.placeholder("float")

Y = tf.placeholder("float")

# Define a linear model: y = w * X
# (tf.mul was renamed tf.multiply in TensorFlow 1.0)

def model(X, w):

    return tf.mul(X, w)

#Set model weights

w = tf.Variable(0.0, name="weights")

y_model = model(X, w)

#Define our cost function

costfunc = (tf.square(Y-y_model))

# Use gradient descent to fit a line to the data

train_op = tf.train.GradientDescentOptimizer(learningRate).minimize(costfunc)

# Launch a tensorflow session and initialize the variables

sess = tf.Session()

init = tf.global_variables_initializer()

sess.run(init)

# Execute everything: one gradient step per sample, repeated for each epoch

for epoch in range(trainingEpochs):

    for (x, y) in zip(xTrain, yTrain):

        sess.run(train_op, feed_dict={X: x, Y: y})

w_val = sess.run(w)

sess.close()

#Plot the data

plt.scatter(xTrain, yTrain)

y_learned = xTrain*w_val

plt.plot(xTrain, y_learned, 'r')

plt.show()

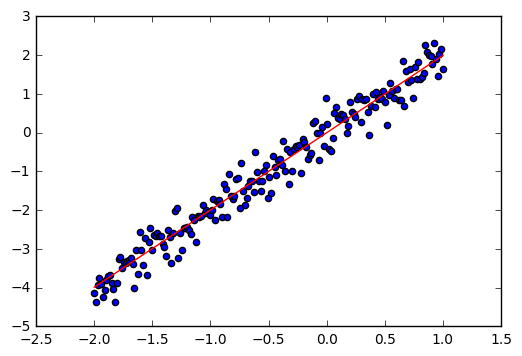

Once you've pasted it in, hit ctrl + a (cmd + a for you mac users) to select it and then ctrl + enter (cmd + enter for mac) And you should see a graph similar to the following:

Let’s Review

That’s it! You just fed a machine a bunch of information and then told it to plot a line that fits the dataset. This line shows the “prediction” of what the value of a variable should be based on a single parameter. In other words, you just taught a machine to PREDICT something. You’re one step closer to Skynet - uh, I mean creating your own AI that won't take over the world. How rad is that!
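If you're curious what the training loop is roughly doing under the hood, here's a hand-rolled sketch in plain NumPy. To be clear, this is not TensorFlow's implementation, just my approximation of per-sample gradient descent on the same squared-error cost, using the same learning rate and epoch count as the notebook. The fixed random seed and the closed-form comparison at the end are my additions:

```python
import numpy as np

rng = np.random.RandomState(0)  # fixed seed (my addition) so reruns give the same data
x_train = np.linspace(-2, 1, 200)
y_train = 2 * x_train + rng.randn(*x_train.shape) * 0.33

learning_rate = 0.01
w = 0.0  # same starting weight as the notebook

for epoch in range(100):
    for x, y in zip(x_train, y_train):
        error = y - w * x                    # residual for this one sample
        w += learning_rate * 2 * error * x   # step against d/dw of (y - w*x)^2

# Closed-form least-squares slope for a line through the origin, for comparison.
w_closed = np.sum(x_train * y_train) / np.sum(x_train ** 2)

print("gradient descent:", w)
print("closed form:     ", w_closed)
```

Both numbers should land close to 2, the slope we baked into the training data, which is the same thing the red line in the plot is showing you.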

In the next blog, we’ll dive deeper into linear regression and I’ll go over how it all works. We’ll also feed our program a CSV file of actual data to try and predict house prices.