Red Hat blog

This is the fourth installment of the “How Full is my Cluster” series. Previously, we explored how Red Hat OpenShift manages scheduling and resource allocation, how to protect nodes from overcommitment, and finally, some general considerations on how to create a capacity management plan.

Given that these articles were originally written more than a year ago, portions may have changed, but the general guidance is still valid. In particular, the recommendation around always specifying the memory and CPU requests for containers is still absolutely relevant as it helps Kubernetes’ scheduler make correct placement decisions.
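As a reminder of what that recommendation looks like in practice, here is a minimal sketch of a pod that declares requests for its container. The pod name, container name, image, and the values themselves are placeholders chosen for illustration, not suggested sizes.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: requests-example            # hypothetical name
spec:
  containers:
  - name: app                       # hypothetical container
    image: registry.example.com/app:latest   # placeholder image
    resources:
      requests:
        cpu: 100m                   # illustrative value only
        memory: 128Mi               # illustrative value only
```

The scheduler uses the values under `requests` when deciding which node has room for the pod.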

While explaining these concepts to customers, one question inevitably arises: if there is a recommendation that every container specify a value for memory and CPU request, what is the suggested value?

In this article I will provide an approach to answering that question.

Determining the right size for pods

Determining the proper values for pod resources is challenging. In an organization, it can be difficult to identify a team that has the proper insights regarding the resource requirements in production for a given application. There are several reasons for this impasse.

- In a pre-container world, this question was not as crucial. Applications ran in VMs, and these VMs were usually oversized and not finely tuned to the application's needs, which wasted resources.

- Proper application resource management is relevant only in production and load test environments. Development teams, which are the best candidates for understanding the needs of their applications, are usually removed from those environments.

- Sometimes the application is not observable enough to allow for the proper estimation of the required resources. This occurs infrequently, but there are still situations where an Application Performance Management (APM) platform is not implemented.

- Even if the development team were empowered to see the relevant environments and the right observability tools were in place, it would still be difficult to correctly estimate the application's resource needs because these needs change over time. They change because the load profile changes (for example, through increased adoption) and because features are added or modified in ways that affect resource usage.

Enter the Vertical Pod Autoscaler

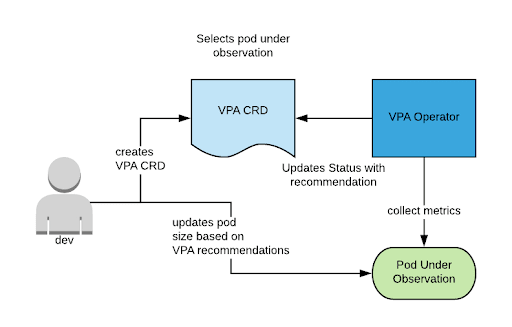

Fast forward to today. Translated into Kubernetes concepts, the idea that the cluster itself should become aware of the compute resources a workload requires leads to a controller that observes a set of pods and provides its best recommendation for memory and CPU (and potentially other metrics).

This is what the Vertical Pod Autoscaler (VPA) does.

VPA is a controller that can be configured to observe a set of pods through the use of a Vertical Pod Autoscaler Custom Resource Definition (CRD). An example is shown below:

    apiVersion: autoscaling.k8s.io/v1beta1
    kind: VerticalPodAutoscaler
    metadata:
      name: vpa-updater-test
    spec:
      selector:
        matchLabels:
          app: vpa-updater

Notice how the label selector is used to select the set of pods that should be observed.

After a relatively short observation time, the VPA CRD will start to present a recommendation in the status field as follows:

    status:
      conditions:
      - lastTransitionTime: 2018-12-28T20:15:11Z
        status: "True"
        type: RecommendationProvided
      recommendation:
        containerRecommendations:
        - containerName: updater
          lowerBound:
            cpu: 25m
            memory: 262144k
          target:
            cpu: 25m
            memory: 262144k
          uncappedTarget:
            cpu: 25m
            memory: 262144k
          upperBound:
            cpu: 3179m
            memory: "6813174422"

| Field | Description |
| --- | --- |
| Lower bound | Minimum amount of resources that should be set for the container. |
| Upper bound | Maximum amount of resources that should be set (above which you are likely wasting resources). |
| Target | VPA’s recommendation based on the algorithm described here and considering additional constraints specified in the VPA CRD. The constraints are not shown in the above VPA example. They allow you to set minimum and maximum caps on VPA’s recommendation; see here for more details. |
| Uncapped target | VPA’s recommendation without considering any additional constraints. |
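To make the constraints mentioned for the target concrete, here is a minimal sketch of the earlier VPA object extended with a resource policy. It assumes the `resourcePolicy.containerPolicies` section of the `autoscaling.k8s.io/v1beta1` API, and the `minAllowed` and `maxAllowed` values are illustrative only.

```yaml
apiVersion: autoscaling.k8s.io/v1beta1
kind: VerticalPodAutoscaler
metadata:
  name: vpa-updater-test
spec:
  selector:
    matchLabels:
      app: vpa-updater
  resourcePolicy:
    containerPolicies:
    - containerName: updater      # container name from the status example
      minAllowed:                 # floor for the capped recommendation (illustrative)
        cpu: 25m
        memory: 128Mi
      maxAllowed:                 # ceiling for the capped recommendation (illustrative)
        cpu: "1"
        memory: 1Gi
```

With such a policy in place, the target would be expected to stay within the configured range, while the uncapped target keeps reporting the unconstrained estimate.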

VPA can actually work in three different modes:

- Off: VPA just recommends its best estimate for resources in the status section of the VerticalPodAutoscaler CRD.

- Initial: When new pods are created, VPA sets the container resource section to follow its estimate.

- Auto: VPA adjusts the container resource spec of running pods, destroying them and recreating them (this process will honor the Pod Disruption Budget, if set).
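The mode is selected on the VPA object itself. As a minimal sketch, assuming the `updatePolicy.updateMode` field of the `autoscaling.k8s.io/v1beta1` API, the earlier example could be switched to recommendation-only mode like this:

```yaml
apiVersion: autoscaling.k8s.io/v1beta1
kind: VerticalPodAutoscaler
metadata:
  name: vpa-updater-test
spec:
  selector:
    matchLabels:
      app: vpa-updater
  updatePolicy:
    updateMode: "Off"   # recommend only; "Initial" and "Auto" select the other modes listed above
```

The value is quoted because an unquoted `Off` can be read as a boolean by YAML parsers.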

Recommendation: as VPA is still maturing, always set VPA to Off (recommendation-only) mode for the workload you are looking to assess. Then, have a process in place for reviewing the provided recommendation and determining whether it should be applied to the application in the next release. Here is a representation of the recommended workflow:

VPA Installation

An installer for VPA on Red Hat OpenShift is available as a Helm template, and the instructions can be found here.

Conclusion

VPA is a tool that you can use to assess the resource consumption of your workloads. Given that the project is still in its initial phases, I recommend using it as another assessment tool. As the VPA project matures beyond its beta status, it should gain the features and stability needed to make it safer to enable the automatic adjustment of container sizes at runtime.

About the author

Raffaele is a full-stack enterprise architect with 20+ years of experience. Raffaele started his career in Italy as a Java Architect then gradually moved to Integration Architect and then Enterprise Architect. Later he moved to the United States to eventually become an OpenShift Architect for Red Hat consulting services, acquiring, in the process, knowledge of the infrastructure side of IT.