Red Hat OpenShift Dedicated has evolved as an effective way to consume OpenShift as a managed service in the public cloud. As we continue to collect feedback from customers, partners, and internal users, we’re excited to be able to present some substantial improvements to the offering, effective this month. I want to focus mainly on the new options available for new OpenShift Dedicated clusters, along with new features that are now available for all OpenShift Dedicated deployments.

New OpenShift Dedicated Cluster Options

Multi-AZ (Stretch) Clusters

New OpenShift Dedicated clusters can now be deployed to either a single Availability Zone (Single-AZ) or distributed across three AZs (Multi-AZ). While there are some performance advantages to having one or more Single-AZ clusters, a Multi-AZ cluster provides maximum availability for a single OpenShift Dedicated cluster in a single Region.

What are stretch clusters?

Up until now, OpenShift Dedicated clusters have only been deployed in a single AZ. This was done in order to maximize cluster performance and simplify capacity planning and application configuration for the customer.

New Multi-AZ clusters can be deployed to AWS Regions that have at least three AZs. This allows the control plane to be distributed across AZs, with one master node and one infra node in each AZ. In the event of a single-AZ outage, etcd quorum is not lost and the cluster can continue to operate normally.
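To see why three AZs are enough, here is a minimal sketch of the quorum arithmetic. It assumes one etcd member per master node and uses the standard majority rule; the AZ names and helper functions are hypothetical and only serve the illustration.

```python
from typing import Mapping


def quorum(members: int) -> int:
    """Smallest majority of an etcd cluster with `members` voting members."""
    return members // 2 + 1


def survives_az_outage(members_per_az: Mapping[str, int], failed_az: str) -> bool:
    """Return True if the members outside `failed_az` still form a majority."""
    total = sum(members_per_az.values())
    remaining = total - members_per_az.get(failed_az, 0)
    return remaining >= quorum(total)


if __name__ == "__main__":
    # Hypothetical layout: one master (and so one etcd member) per AZ.
    multi_az = {"az-a": 1, "az-b": 1, "az-c": 1}
    for az in multi_az:
        print(az, "outage survivable:", survives_az_outage(multi_az, az))
```

With three members, the quorum is two, so losing any one AZ still leaves a majority. A layout that puts all three members in one AZ does not have that property.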

In addition, the application nodes will also be distributed evenly across AZs. Any new Multi-AZ cluster comes with a minimum of nine application nodes (three per AZ) and new nodes must be added in groups of three (one per AZ). This allows application pods to be deployed across multiple nodes in multiple AZs for maximized service availability.
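The sizing rule above (at least nine application nodes, added in groups of three) can be sketched as a small check. The constants come from the text; the function names are hypothetical and this is not part of any OpenShift Dedicated tooling.

```python
AZ_COUNT = 3       # Multi-AZ clusters span three AZs
MIN_APP_NODES = 9  # minimum application nodes for a new Multi-AZ cluster


def validate_app_node_count(count: int) -> None:
    """Raise ValueError if `count` breaks the Multi-AZ sizing rule."""
    if count < MIN_APP_NODES:
        raise ValueError(f"need at least {MIN_APP_NODES} application nodes, got {count}")
    if count % AZ_COUNT != 0:
        raise ValueError(f"application nodes must be a multiple of {AZ_COUNT}, got {count}")


def nodes_per_az(count: int) -> int:
    """Number of application nodes that land in each AZ for a valid count."""
    validate_app_node_count(count)
    return count // AZ_COUNT


if __name__ == "__main__":
    for count in (9, 12, 15):
        print(count, "nodes ->", nodes_per_az(count), "per AZ")
```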

Multi-AZ Cluster Considerations

Capacity planning

Although capacity planning, both for overall cluster capacity and for disaster avoidance, is crucial regardless of node distribution, the math changes a bit when accounting for the possibility of an AZ outage. At a high level, in order to architect for an AZ outage, enough resources must be free at all times in the remaining AZs to absorb the workloads from the lost AZ. In addition, the size and configuration of individual applications can change the math, as larger or more complex services may require special consideration.
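As a rough illustration of that math, the sketch below assumes nodes are spread evenly across AZs and treats capacity as a single aggregate number. The 16 GiB node size and the function names are hypothetical, chosen only to make the arithmetic concrete.

```python
from fractions import Fraction


def max_safe_utilization(az_count: int = 3) -> Fraction:
    """Fraction of total capacity that can be used while still tolerating
    the loss of one AZ, assuming nodes are spread evenly across AZs."""
    return Fraction(az_count - 1, az_count)


def surviving_capacity(per_node: int, nodes_per_az: int, az_count: int = 3) -> int:
    """Aggregate capacity left after one AZ is lost."""
    return per_node * nodes_per_az * (az_count - 1)


def fits_after_az_outage(requested: int, per_node: int,
                         nodes_per_az: int, az_count: int = 3) -> bool:
    """True if the total requested resources fit in the surviving AZs."""
    return requested <= surviving_capacity(per_node, nodes_per_az, az_count)


if __name__ == "__main__":
    # Hypothetical cluster: 9 nodes (3 per AZ), 16 GiB of usable memory each.
    print("max safe utilization:", max_safe_utilization())
    print("surviving GiB:", surviving_capacity(16, 3))
    print("90 GiB fits:", fits_after_az_outage(90, 16, 3))
    print("100 GiB fits:", fits_after_az_outage(100, 16, 3))
```

This only checks aggregate capacity. A large pod may still fail to schedule if no single surviving node has room for it, which is one reason larger services need the special consideration mentioned above.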

Persistent volumes

Persistent volumes (PVs) in OpenShift Dedicated are backed by EBS. AWS does not allow EBS volumes to ‘move’ across AZs. Even though the pods will attempt to move, the PV itself cannot be attached to new pods in a different AZ, and those pods will not deploy correctly. This means that if an application is using a single PV in a single AZ, there could be an outage if that AZ should go down. The best way around this is to architect applications so that PVs are replicated across AZs or to use a highly available database service, such as RDS, instead of a single PV for critical applications.
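A toy model can make the constraint concrete. This is a simplified sketch, not how the scheduler is implemented: the `Pod` class, zone names, and `schedulable_zones` helper are hypothetical, and the only rule modeled is that a pod with an EBS-backed PV can run only in that PV's AZ.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Set


@dataclass(frozen=True)
class Pod:
    name: str
    pv_zone: Optional[str] = None  # AZ of the pod's EBS-backed PV; None if stateless


def schedulable_zones(pod: Pod, healthy_zones: Iterable[str]) -> Set[str]:
    """Zones where the pod can run: any healthy zone for a stateless pod,
    only the PV's own zone (if healthy) for a pod bound to a PV."""
    healthy = set(healthy_zones)
    if pod.pv_zone is None:
        return healthy
    return healthy & {pod.pv_zone}


if __name__ == "__main__":
    healthy = {"az-b", "az-c"}  # az-a is down in this scenario
    for pod in (Pod("web"), Pod("db", pv_zone="az-a")):
        zones = schedulable_zones(pod, healthy)
        print(pod.name, "->", sorted(zones) if zones else "cannot be rescheduled")
```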

New compute node types and sizes

Additional node types and sizes provide more flexibility for OpenShift Dedicated and allow for larger and more diverse workloads on OpenShift Dedicated clusters.

Previously, compute nodes were a single type and size (m5.xlarge). New clusters can now choose from three high-level instance types (general purpose, memory-optimized, and compute-optimized) and a total of seven instance options.

The compute node instance type is chosen on cluster creation, and all compute nodes will be the same type on a single cluster. This cannot be changed once the cluster has been deployed.

Bring your own cloud account (BYOC)

Finally, there is now an option to deploy OpenShift Dedicated to a customer-owned AWS account. This allows a customer to leverage existing AWS contracts and pricing, as well as use existing security profiles. This should make life a bit easier for teams that want the benefits of OpenShift Dedicated, but already have existing infrastructure on AWS that they would like to take advantage of.

New OpenShift Dedicated Features

In addition to the options available for new OpenShift Dedicated clusters, a number of features have also been introduced for all OpenShift Dedicated clusters that are running OpenShift Container Platform 3.11 or later.

Cluster Administrator Console

Included as part of the OCP 3.11 upgrade, a new console optimized for OpenShift Dedicated administrators is available to allow for greater visibility and control of an OpenShift Dedicated cluster. This should be a welcome addition for teams trying to track down the root cause of a complex, microservices-entangled issue.

A dedicated-admin or dedicated-reader will be able to see all objects on a cluster, along with visibility into cluster and node usage, access control, and cluster event streams. A normal user will be limited to information restricted to the projects they can access on the cluster.

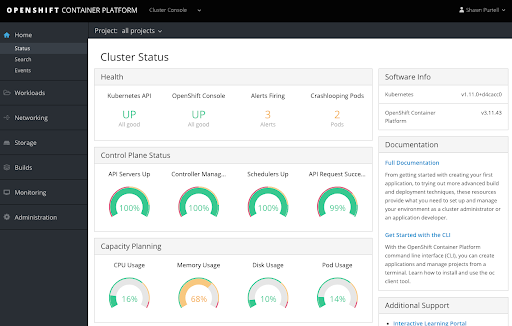

Cluster status dashboard

This new admin console view is integrated with Prometheus, and provides increased visibility into an OpenShift Dedicated cluster’s status, along with a high-level view into resource usage across the cluster. Only dedicated-admins and dedicated-readers will be able to see this new cluster status page.

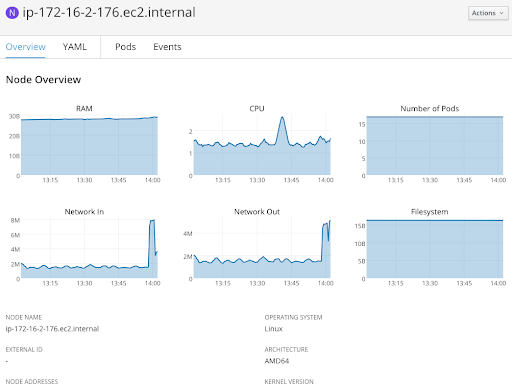

Node and project visibility

More granular views at the node and project levels are also available for dedicated-admins and dedicated-readers. Normal users will not be able to view node status and usage but will be able to see information related to the projects they can access on the cluster.

Additional Cluster Console Features

- Containers as a service: Kubernetes objects across Networking, Storage, and Projects for the entire cluster will be viewable and in many cases can be edited and deleted directly from the console.

- Access control management: The cluster console includes a way to more easily view users, roles, and role bindings across the cluster. Project administrators will be able to self-manage project roles.

- Cluster-wide event stream: This allows visibility into events related to the projects that a user can access, along with the ability to search for specific events or filter by type.

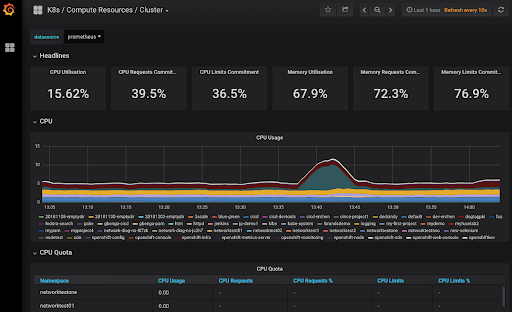

- Grafana dashboards: Finally, new Grafana dashboards are available to allow for greater visibility into cluster usage, along with summary reports for all nodes and projects on the cluster. This view also shows both utilization and limit/request commitments to help better manage and monitor cluster capacity.
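For readers new to the term, a commitment is the ratio of what workloads have declared (requests or limits) to what the cluster can allocate, as opposed to what is actually being used. The sketch below is only an illustration of that ratio, with hypothetical numbers; it is not how the dashboards compute their values.

```python
def commitment(total_declared: float, allocatable: float) -> float:
    """Ratio of declared resources (summed requests or limits) to the
    allocatable capacity. Values above 1.0 mean overcommitment."""
    if allocatable <= 0:
        raise ValueError("allocatable capacity must be positive")
    return total_declared / allocatable


if __name__ == "__main__":
    allocatable_cores = 36.0  # hypothetical cluster-wide allocatable CPU
    print("request commitment:", commitment(18.0, allocatable_cores))
    print("limit commitment:", commitment(54.0, allocatable_cores))
```

In this example the requests commit half of the cluster, while the limits add up to more than the cluster can provide, which is worth knowing before a busy period.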

Persistent volume encryption

All new persistent volumes created on OpenShift Dedicated clusters are now encrypted at rest.

Load Balancer services

Load balancers for use with individual services can now be purchased for use with OpenShift Dedicated clusters. The primary benefits of these load balancer services are that they enable non-HTTP/SNI ingress traffic into the cluster and the use of nonstandard ports for individual services. This allows a more flexible environment for complex network services architectures.
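As a sketch of what this could look like in practice, the snippet below builds a standard Kubernetes `Service` manifest of type `LoadBalancer` and prints it as JSON. It assumes these load balancer services are exposed through that standard Service type; the service name, selector, and port are hypothetical.

```python
import json
from typing import Any, Dict


def load_balancer_service(name: str, selector: Dict[str, str], port: int,
                          target_port: int, protocol: str = "TCP") -> Dict[str, Any]:
    """Build a Kubernetes Service manifest of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [
                {
                    "name": f"{protocol.lower()}-{port}",
                    "protocol": protocol,
                    "port": port,               # port exposed by the load balancer
                    "targetPort": target_port,  # port the pods listen on
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical non-HTTP workload on a nonstandard port.
    manifest = load_balancer_service("mqtt-broker", {"app": "mqtt-broker"}, 8883, 8883)
    print(json.dumps(manifest, indent=2))
```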

Dedicated admin updates

The capabilities of the dedicated-admin role have been expanded for OpenShift Dedicated clusters. In addition to the cluster console mentioned above, the following capabilities have been added to the role:

- Modify default project templates: Admins can now directly update the default project template used for each cluster.

- Manage elevated serviceaccount permissions: Admins can now create serviceaccounts that have either dedicated-admin or dedicated-reader access.

What’s Next?

To learn more about the new pricing and features in Red Hat OpenShift Dedicated, please visit https://www.openshift.com/products/dedicated/.
