Red Hat blog

OpenShift and Kubernetes, among other things, provide a complete container runtime environment. As part of understanding any runtime environment, it's important to know the expectations for building highly available applications. Thankfully, OpenShift and Kubernetes make this quite simple.

Scale

Build your application components (e.g. web framework, messaging tier, datastore) to scale, and always scale them to at least 2 pods. OpenShift's default scheduler configuration spreads the pods of a service across nodes where it can, so those two pods should end up on separate nodes. This is the minimum requirement for an upgrade or maintenance update to work on 1 node at a time without causing any application component downtime.

If an application component needs to satisfy a minimum throughput requirement that can't be met with only 1 pod, you'll need to scale that component to the point where removing 1 pod will leave enough compute power to satisfy demand. Using horizontal pod autoscaling is also a good way to ensure you always have enough capacity.
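The sizing rule above comes down to a bit of arithmetic. Here is a minimal sketch of it; the function name and the requests-per-second figures are hypothetical and only there for illustration, so measure your own per-pod throughput before relying on numbers like these.

```python
import math


def replicas_for_throughput(required_rps, per_pod_rps, tolerated_losses=1):
    """Return how many pods to run so demand is still met after losing pods.

    required_rps: throughput the component must sustain (hypothetical unit).
    per_pod_rps: throughput a single pod can handle.
    tolerated_losses: pods that may be down at once (1 node at a time -> 1).
    """
    if required_rps < 0 or per_pod_rps <= 0 or tolerated_losses < 0:
        raise ValueError("throughput values must be positive")
    # Pods needed just to carry the load, never fewer than one.
    needed = max(1, math.ceil(required_rps / per_pod_rps))
    # Add headroom so removing pods still leaves `needed` running.
    return needed + tolerated_losses


if __name__ == "__main__":
    print(replicas_for_throughput(450, 100))  # load needs 5 pods, plus 1 spare
    print(replicas_for_throughput(50, 100))   # small load still gets 2 pods
```

With the default of one tolerated loss, the result never drops below 2, which matches the minimum described in this section.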

Building your application components to scale can be difficult, especially if a component requires state. As a general rule, it's best to avoid shared storage for applications that need to scale. Shared storage makes concurrency harder to handle and can become a performance bottleneck.

However, for application components that require unique persistent storage for each pod, we've introduced a new tech preview feature in OpenShift 3.5 called StatefulSets (formerly PetSets) to help alleviate most of the complex, state-related problems. The primary innovation of StatefulSets is that they give each pod of the set an identity that corresponds to that pod's persistent volume(s). If a pod of a StatefulSet is lost, a new pod with the same virtual identity is instantiated and the associated storage is reattached. This process mirrors what happens manually in most situations today and makes it easier than ever to run stateful applications at scale.
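To make the identity idea concrete, here is a small sketch of the naming scheme Kubernetes documents for StatefulSets: pods are named `<set name>-<ordinal>` and each claim is named `<claim template name>-<pod name>`. The set name `db` and claim template name `data` are hypothetical, and the function only models the naming, not the controller itself.

```python
def stateful_identities(set_name, claim_template, replicas):
    """List the stable pod name and volume claim name for each ordinal."""
    identities = []
    for ordinal in range(replicas):
        pod = f"{set_name}-{ordinal}"
        # The claim name is derived from the pod name, so a replacement pod
        # with the same ordinal finds the same storage again.
        identities.append({"pod": pod, "claim": f"{claim_template}-{pod}"})
    return identities


if __name__ == "__main__":
    for identity in stateful_identities("db", "data", 3):
        print(identity["pod"], "->", identity["claim"])
```

Because both names depend only on the set name and the ordinal, a pod that is lost and recreated maps back to the same claim.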

On a related note, a feature is currently being developed in Kubernetes that gives pods more advanced ways to declare their minimum availability. The feature is called pod disruption budget and is currently due to be available in OpenShift 3.6.
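The idea behind a disruption budget can be sketched with very little code. This is a simplified model under my own assumptions, not the Kubernetes implementation: the function names are hypothetical, and it only covers a budget expressed as a minimum number of available pods.

```python
def disruptions_allowed(healthy_pods, min_available):
    """How many pods could be voluntarily removed right now."""
    return max(0, healthy_pods - min_available)


def can_evict(healthy_pods, min_available):
    """True if removing one more pod keeps the component within budget."""
    return disruptions_allowed(healthy_pods, min_available) > 0


if __name__ == "__main__":
    # 3 healthy pods with a minimum of 2: one pod may be drained.
    print(can_evict(3, 2))
    # 2 healthy pods with a minimum of 2: maintenance has to wait.
    print(can_evict(2, 2))
```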

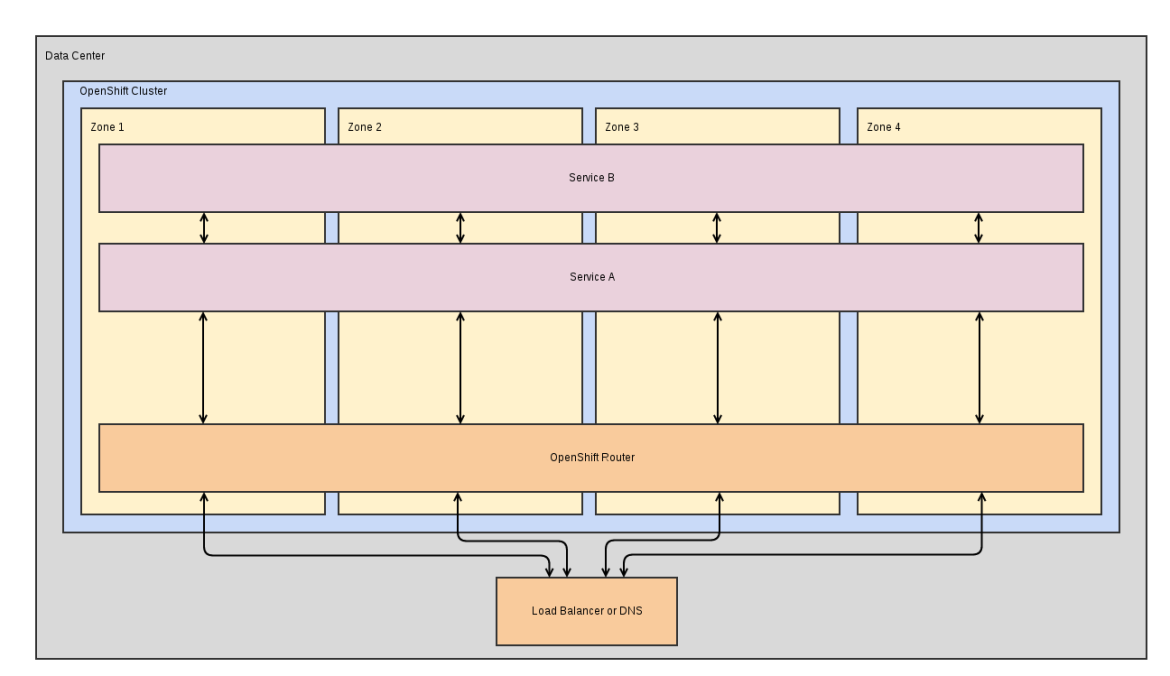

Topology

As a cluster admin, you'll want to set predicates, priorities, and node labels to let applications take advantage of the HA characteristics of your cluster topology. More info on scheduling and topology options can be found in one of my previous posts.

As a user, you should be aware of what topology options are available to you as well as how your application may behave with different topologies. Some questions you might want to ask are:

- Can you handle traffic within the pods of a service crossing zones (e.g. datastore replicaset)?

- Can you handle traffic from one service talking to another service crossing zones (e.g. web framework -> datastore)?

You can replace zone in those questions with rack or region or any other division that's offered. After answering these questions, the work required may be very little. If, for example, you are only dealing with multiple zones and you can live with the default configuration (e.g. anti-affinity for pods within a service), then you can accept the defaults and know that all your pods will be spread nicely. But if you require a copy of your application stack in each zone, you're going to need to create multiple services (1 for each zone) and set the corresponding zone label selector on each copy of your application stack. With most cloud providers, the latency between zones is quite low, and many applications can operate fine with service zone anti-affinity, getting a high level of HA with fairly little work.
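For the one-copy-per-zone case, the repetition is easy to script. The sketch below generates a Service and a ReplicationController per zone as JSON manifests. Several things here are assumptions of mine: the node label key `zone` (use whatever your cluster admin defined), the zone names, the image name, and port 8080.

```python
import json

ZONE_LABEL = "zone"  # hypothetical node label key set by the cluster admin


def per_zone_stack(app, image, zones, replicas=2):
    """Build one Service and one ReplicationController for each zone."""
    manifests = []
    for zone in zones:
        name = f"{app}-{zone}"
        labels = {"app": app, "zone": zone}
        manifests.append({
            "apiVersion": "v1",
            "kind": "Service",
            "metadata": {"name": name},
            # The service only selects the pods of its own zone's copy.
            "spec": {"selector": dict(labels), "ports": [{"port": 8080}]},
        })
        manifests.append({
            "apiVersion": "v1",
            "kind": "ReplicationController",
            "metadata": {"name": name},
            "spec": {
                "replicas": replicas,
                "selector": dict(labels),
                "template": {
                    "metadata": {"labels": dict(labels)},
                    "spec": {
                        # Pin this copy's pods to nodes labeled with the zone.
                        "nodeSelector": {ZONE_LABEL: zone},
                        "containers": [{"name": app, "image": image}],
                    },
                },
            },
        })
    return manifests


if __name__ == "__main__":
    stack = per_zone_stack("web", "example/web:1", ["zone-a", "zone-b"])
    print(json.dumps(stack, indent=2))
```

Each printed object could be saved to a file and created on its own, which keeps the per-zone copies identical apart from their names and zone labels.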

Tuning

When deploying your application, it's important to tune based on memory and CPU consumption. Images provided by OpenShift will auto-tune themselves based on how much memory they are allocated, so you'll simply need to allocate enough for your application to function properly. If you are building a custom image, you'll be responsible for auto-tuning your application runtime based on the resource limits set. More details can be found here.
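If you do build a custom image, the auto-tuning usually starts with reading the container's memory limit. Here is a rough sketch of that step. It assumes a cgroup v1 layout, where the limit is exposed at `/sys/fs/cgroup/memory/memory.limit_in_bytes`; the 50% heap fraction, the 128 MB fallback, and the function names are illustrative choices of mine, not what the OpenShift images do.

```python
from pathlib import Path

# cgroup v1 location of the container memory limit (an assumption about
# the host; other cgroup layouts expose the limit elsewhere).
CGROUP_V1_LIMIT = Path("/sys/fs/cgroup/memory/memory.limit_in_bytes")

# Without a limit, cgroup v1 reports a huge number; treat that as "unset".
UNLIMITED_THRESHOLD = 2 ** 60


def read_memory_limit(path=CGROUP_V1_LIMIT):
    """Return the memory limit in bytes, or None if it cannot be read."""
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        return None


def heap_size_mb(limit_bytes, fraction=0.5, fallback_mb=128):
    """Pick a heap size in MB as a fraction of the container memory limit."""
    if limit_bytes is None or limit_bytes <= 0 or limit_bytes >= UNLIMITED_THRESHOLD:
        return fallback_mb
    return max(1, int(limit_bytes * fraction) // (1024 * 1024))


if __name__ == "__main__":
    print(heap_size_mb(read_memory_limit()))
```

An entrypoint script could pass the result to the runtime, for example as a maximum heap flag, leaving the rest of the limit for everything that lives outside the heap.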

Application Health

To ensure OpenShift is able to recognize when your application is healthy, liveness and readiness probes can be used to detect when a pod is in a bad state and should be restarted or taken out of (or never added into) rotation. Liveness and readiness checks are implemented as either HTTP calls, container executions, or TCP socket calls and can contain any sort of custom logic to validate that an application component is healthy.
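The three probe types map onto three small fragments of a container definition. The sketch below builds them as plain dictionaries using the Kubernetes probe fields `httpGet`, `exec`, `tcpSocket`, `initialDelaySeconds`, and `timeoutSeconds`. The helper names, the `/ready` path, port 8080, the image name, and the delay values are all hypothetical.

```python
import json


def http_probe(path, port, initial_delay=0, timeout=1):
    """Probe that passes when an HTTP GET returns a success status."""
    return {"httpGet": {"path": path, "port": port},
            "initialDelaySeconds": initial_delay, "timeoutSeconds": timeout}


def exec_probe(command, initial_delay=0, timeout=1):
    """Probe that passes when the command exits with status 0."""
    return {"exec": {"command": list(command)},
            "initialDelaySeconds": initial_delay, "timeoutSeconds": timeout}


def tcp_probe(port, initial_delay=0, timeout=1):
    """Probe that passes when a TCP connection to the port succeeds."""
    return {"tcpSocket": {"port": port},
            "initialDelaySeconds": initial_delay, "timeoutSeconds": timeout}


def container_with_probes(name, image):
    """Container fragment with a readiness and a liveness probe attached."""
    return {
        "name": name,
        "image": image,
        # Readiness decides whether the pod receives traffic.
        "readinessProbe": http_probe("/ready", 8080, initial_delay=5),
        # Liveness decides whether the container gets restarted.
        "livenessProbe": tcp_probe(8080, initial_delay=15),
    }


if __name__ == "__main__":
    print(json.dumps(container_with_probes("web", "example/web:1"), indent=2))
```

The custom logic mentioned above lives behind the endpoint or command that the probe calls, so the pod definition itself stays this small.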

Wrap Up

High availability is a complicated topic and I have only addressed how to think about HA with OpenShift and Kubernetes. Hopefully, if you desire HA, you have already thought through the myriad complications of dealing with HA for your application. What OpenShift/Kubernetes adds to the equation should only make things easier.