Red Hat blog

Introduction

In this series we are going to discuss deploying an OpenShift cluster on development boards, specifically MinnowBoards. You might be asking, why the hell would I do that? Well, there are some benefits. First, they have much lower power consumption. In my case, with light demand, one Minnowboard takes approximately 3W-4W. Running a cluster of 4 boards including a switch takes 17W, and deploying and starting 10 containers adds 1W. But yeah, that does not include fast disks. Those will come as well. The next benefit is the form factor. My cluster of four boards has dimensions of 7.5cm x 10.0cm x 8cm, about the size of a pack of credit cards. Quite a powerful cluster that can fit pretty much anywhere. The small size brings another benefit - mobility. Do you need computing power on the go? Well, this kind of board can help solve your problem. Anyway, let’s get on with it.
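To put those power figures in perspective, here is a small back-of-the-envelope sketch. The wattages are the ones quoted above; the function names and the 24-hour window are my own assumptions, and the switch figure is only what is left over after subtracting the boards, not something I measured.

```python
# Figures quoted in the post, in watts.
CLUSTER_IDLE_W = 17.0        # four boards plus the switch
TEN_CONTAINERS_W = 1.0       # extra draw after starting 10 containers
BOARD_W_RANGE = (3.0, 4.0)   # per-board draw under light demand
BOARDS = 4


def daily_energy_kwh(watts: float, hours: float = 24.0) -> float:
    """Convert a constant power draw into energy used over `hours`."""
    return watts * hours / 1000.0


def implied_switch_watts() -> tuple:
    """Range left for the switch once the boards are subtracted.

    This is an inference from the quoted numbers, not a measurement.
    """
    low_board, high_board = BOARD_W_RANGE
    return (CLUSTER_IDLE_W - BOARDS * high_board,
            CLUSTER_IDLE_W - BOARDS * low_board)


loaded_w = CLUSTER_IDLE_W + TEN_CONTAINERS_W
print(f"loaded draw: {loaded_w} W")
print(f"energy per day: {daily_energy_kwh(loaded_w):.3f} kWh")
print(f"implied switch draw (low, high): {implied_switch_watts()} W")
```

Running the whole cluster with 10 containers around the clock works out to well under half a kilowatt-hour per day.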

Why am I using Minnowboards?

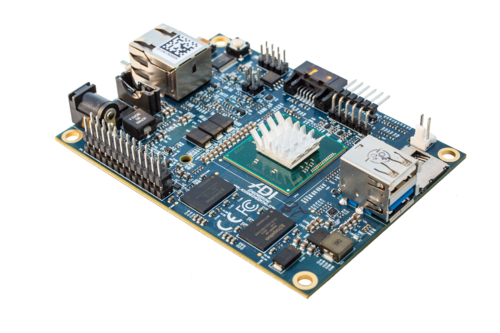

So you may have never heard about Minnowboards? No problem, that’s OK! Have you heard about Raspberry Pi? Yes, you have. Well, the Minnowboard is quite similar but, from my perspective, much more interesting.

The biggest benefit is that it’s a normal x86-64 computer. No need for a special version of your distribution. No need for specially compiled software. Just run anything you are already running on your server, laptop or desktop. Next, it has 2GB of memory. Sometimes people ask me, why don’t you use a cheaper ARM based board? Well, essentially I prefer x86 based systems, and when you check how much 2GB ARM boards cost, it’s the same as the Minnowboard, which kills the price argument. The next thing is that most ARM based boards provide only 100Mbit Ethernet, while the Minnowboard provides 1Gbit. The other thing people say is that ARM needs less energy and is more efficient. Well, when you check the “nicer” boards, they have pretty much the same power requirements as the Minnowboards.

Note: the only competitor I know of is the ODROID-XU4. It matches all the specs and is slightly cheaper. Still, it has the disadvantage of being ARM based.

So what’s the problem with ARM? Well, OpenShift is currently developed for Intel based systems. Yes, it should be possible to run OpenShift on ARM, but you would need to compile it for that platform, and you would also need to rebuild all Docker containers for ARM. It’s possible, but it’s a lot of work, and until OpenShift has official support for ARM, I am going to stick with Intel based systems, official OpenShift builds and official OpenShift containers.

The cluster

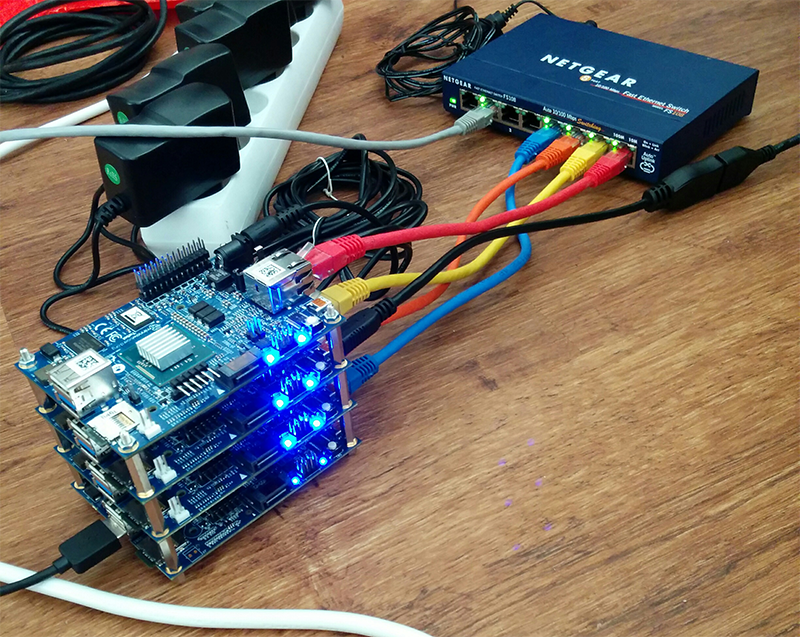

Now, it’s time to take a look at what the cluster really looks like.

As you can see, there are 4 boards in the cluster. Going bottom-up, there is the router (which I explain below), the master and two nodes. All four boards are connected through their 1Gbit port to the internal network; in this picture the internal network is represented by my old switch. The router board also has a 1Gbit Ethernet USB adapter that connects the cluster to the external network / internet.

The other black cable plugged into one of the boards is an HDMI adapter. Unfortunately, I still have not solved the power supply problem of having 4 power adapters (5V, 2A) connected to the power grid. I need to find a power supply that requires only one adapter and is able to power at least these 4 boards. I have not found one yet, but if you know of one, please leave me a comment below.

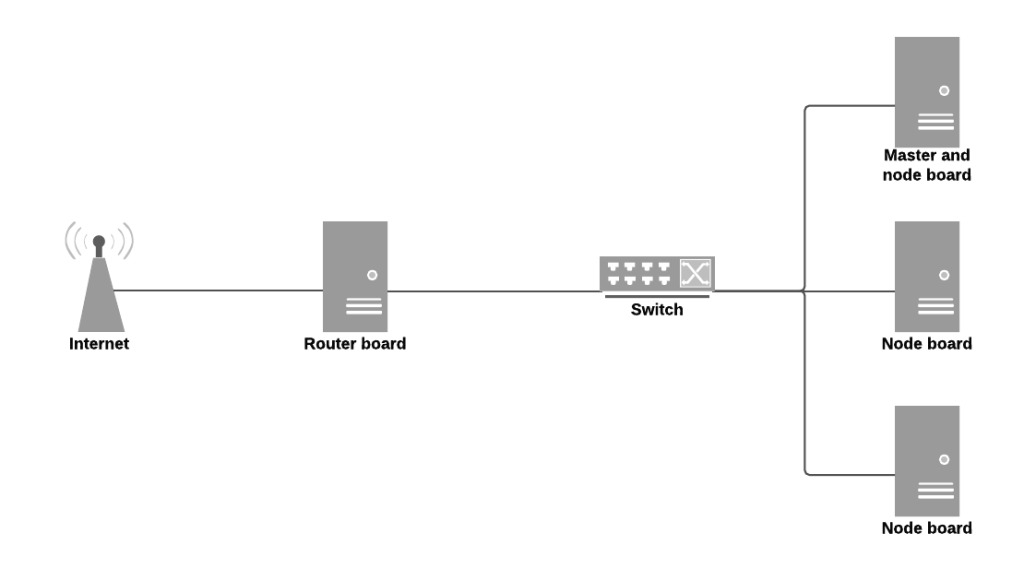

Architecture

Router

The bottom-most board, as mentioned, is the cluster router. It provides the cluster with basic services and takes care of the internal network. In particular, it provides these services:

- Gateway

- DHCP

- DNS

- Ansible installer

Eventually the router should also provide PXE, so we are going to need to add TFTP into the mix. With PXE boot, the nodes would boot using an image provided by the router. That is not there yet, though; it is just a plan for the future.

Master

The next board serves as the OpenShift master and an infrastructure node. There are at least two essential services provided by containers running on the master. The first of those is the registry. Whenever OpenShift builds a Docker image, it needs a place to save it. For this purpose there is an internal registry that provides such a service to the platform. The other service you need is the OpenShift router (not to be confused with the router board described above). This router provides access from the internal network to the virtual OpenShift network.

Nodes

The other two boards serve as regular OpenShift nodes. These are used simply for deploying your own containers. Whenever you tell OpenShift to deploy and start a container, it will happen on one of those two nodes. The nodes also run the Kubelet, which communicates with the master to make sure everything is running properly.
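To summarize the architecture in one place, here is a minimal sketch of the four boards and what each one provides. The board names and service labels are hypothetical, picked only for illustration, and the listing follows the description above rather than any real configuration file.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Board:
    name: str   # hypothetical hostname, not taken from my real setup
    role: str   # "router", "master" or "node"
    services: List[str] = field(default_factory=list)


# Listed bottom-up, the same way the boards are stacked.
CLUSTER = [
    Board("router", "router", ["gateway", "dhcp", "dns", "ansible-installer"]),
    Board("master", "master", ["openshift-master", "registry", "openshift-router"]),
    Board("node1", "node", ["kubelet"]),
    Board("node2", "node", ["kubelet"]),
]


def boards_providing(service: str) -> List[str]:
    """Names of the boards that offer the given service."""
    return [b.name for b in CLUSTER if service in b.services]


def user_workload_boards() -> List[str]:
    """Boards where your own containers end up: only the regular nodes."""
    return [b.name for b in CLUSTER if b.role == "node"]


print(boards_providing("dns"))
print(boards_providing("registry"))
print(user_workload_boards())
```

The PXE and TFTP services are left out on purpose, since they are only planned.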

User experience

To allow us to use URLs that can be resolved by a browser outside of the cluster (but on the internal network, e.g. my laptop connected to my switch), we need to do some DNS work. The DNS server on the router board is configured to provide a consistent DNS experience. With my setup I can use the OpenShift command line tools, the web console, and my applications at *.router.default.svc.cluster.local. It also provides wildcard DNS resolution for the deployed and exposed services.
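If wildcard DNS is new to you, the idea can be sketched in a few lines. This is a simplified illustration and not the DNS server I run: the IP addresses and the `master.example.lan` name are made up, and only the wildcard domain comes from my setup.

```python
from typing import Dict, Optional

# Hypothetical records; the addresses are placeholders.
RECORDS: Dict[str, str] = {
    "master.example.lan": "192.168.1.10",
    "*.router.default.svc.cluster.local": "192.168.1.10",
}


def resolve(name: str, records: Dict[str, str] = RECORDS) -> Optional[str]:
    """Return the address for `name`, preferring an exact record.

    If there is no exact match, try wildcard records for each parent
    domain, from the most specific to the least specific.
    """
    name = name.rstrip(".").lower()
    if name in records:
        return records[name]
    labels = name.split(".")
    for i in range(1, len(labels)):
        wildcard = "*." + ".".join(labels[i:])
        if wildcard in records:
            return records[wildcard]
    return None


print(resolve("myapp.router.default.svc.cluster.local"))
print(resolve("master.example.lan"))
print(resolve("unknown.example.lan"))
```

Every exposed application gets its own hostname under the wildcard domain, yet a single record is enough to send all of them to the OpenShift router.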

For the all-in-one virtual machine we are using a service called xip.io, but that requires an internet connection and is a dependency on a service that I have no control over. The internal DNS server provides a much smoother and more reliable experience.
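For comparison, xip.io works by reading the IP address straight out of the hostname, so a name such as `myapp.10.0.0.1.xip.io` resolves to `10.0.0.1`. The sketch below mimics that mapping locally; the function name is my own and this is not the code behind the service.

```python
import ipaddress
from typing import Optional


def xip_address(hostname: str) -> Optional[str]:
    """Extract the IPv4 address embedded in an xip.io style hostname."""
    labels = hostname.rstrip(".").lower().split(".")
    # We need four address labels followed by "xip" and "io".
    if len(labels) < 6 or labels[-2:] != ["xip", "io"]:
        return None
    candidate = ".".join(labels[-6:-2])
    try:
        return str(ipaddress.IPv4Address(candidate))
    except ipaddress.AddressValueError:
        return None


print(xip_address("myapp.10.0.0.1.xip.io"))
print(xip_address("myapp.example.com"))
```

The trick is handy for a quick demo, but every lookup still has to reach the public service, which is exactly the dependency the internal DNS server avoids.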

Conclusion

Today we discussed what the cluster looks like and its architecture. In the next blog post we are going to install the software on the router board, which will provide us with an environment to start installing the OpenShift master and nodes. I would love to get some feedback on this endeavour, and in case you have any questions, feel free to ask in the comments as well.