Jenkinsfiles have only been an integral part of Jenkins since version 2, but they have quickly become the de facto standard for building continuous delivery pipelines with Jenkins. A Jenkinsfile lets you define a pipeline as code using a Groovy DSL and check it into source control, so that you can track, review, audit, and manage the lifecycle of changes to your continuous delivery pipelines the same way you manage your application's source code.

The Groovy DSL syntax, referred to as the scripted syntax, is the better-known and more established way to build Jenkins pipelines and was the default when Jenkins 2 was released. Since Jenkins 2.5, Jenkins has also supported a newer declarative syntax that offers a simpler way to control all aspects of the pipeline. The scripted and declarative syntaxes are two ways to define a pipeline, but both translate to the same execution blocks in Jenkins and achieve the same result.

In its simplest form, the declarative syntax consists of an agent, which defines the Jenkins slave that executes the pipeline, and a number of stages, each containing the steps to perform.

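```groovy
pipeline {
    agent any            // run the pipeline on any available Jenkins slave
    stages {
        stage('Build') {
            steps {
                // ... steps to perform in this stage
            }
        }
    }
}
```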
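For comparison, a roughly equivalent skeleton in the scripted syntax might look like the following sketch. It is not taken from the original post: node allocates an executor on an available agent, and the stage body is left as a placeholder.

```groovy
// Scripted-syntax sketch of the same skeleton
node {                      // allocate an executor on any available agent
    stage('Build') {
        // ... steps to perform in this stage
    }
}
```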

OpenShift has always provided tight integration with Jenkins through the OpenShift Pipeline Plugin, which comes pre-installed on the certified Jenkins image available on OpenShift and can also be installed on any Jenkins server. The OpenShift Pipeline Plugin provides a number of steps for performing operations such as build and deploy on applications running on OpenShift. Although useful, it was originally designed around Jenkins 1.x build steps and supports only a subset of the operations needed to interact with OpenShift in a continuous delivery pipeline.

The OpenShift Client (DSL) Plugin is the next generation of the OpenShift Pipeline Plugin. It aims to provide a readable and comprehensive fluent syntax for interacting with OpenShift, and because it works through the OpenShift command line interface (CLI), it can mirror the full functionality of the CLI, which is a significant advantage.
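To make the mirroring concrete, here is a hedged sketch of a few DSL calls next to the oc commands they roughly correspond to. It assumes a project named `mapit` containing a build config named `mapit` and objects labelled `app=mapit-dev`, borrowing names from the MapIt example later in this post; in a declarative pipeline it would sit inside a stage's `steps` block.

```groovy
// Sketch only: project, object, and label names are assumptions borrowed from the MapIt example.
script {
    openshift.withCluster() {               // use the default cluster configured for Jenkins
        openshift.withProject('mapit') {    // scope the calls below, like `oc -n mapit`
            // Like `oc get bc/mapit`, but returns a boolean instead of failing
            def bc = openshift.selector('bc', 'mapit')
            echo "BuildConfig mapit exists: ${bc.exists()}"

            // Like `oc get dc -l app=mapit-dev`: select objects by label
            def dcs = openshift.selector('dc', [app: 'mapit-dev'])
            echo "Matching DeploymentConfigs: ${dcs.names()}"

            // Commands without a dedicated method can be passed straight through to oc
            def result = openshift.raw('status')
            echo "oc status output:\n${result.out}"
        }
    }
}
```

withCluster() also accepts the name of a cluster configured in Jenkins, which is how a single pipeline can address more than one OpenShift cluster.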

Although the OpenShift Client Plugin also works with the Jenkins scripted pipeline syntax, combining it with the declarative pipeline syntax yields a powerful combination that is easy to read and write, yet capable of performing complex operations across multiple OpenShift clusters. An example of this combination is the following pipeline, which deploys a geospatial Spring Boot application called MapIt on OpenShift:

```groovy
pipeline {
    agent any
    stages {
        stage('Build') {
            when {
                expression {
                    openshift.withCluster() {
                        return !openshift.selector('bc', 'mapit-spring').exists()
                    }
                }
            }
            steps {
                script {
                    openshift.withCluster() {
                        openshift.newApp('redhat-openjdk18-openshift:1.1~https://github.com/siamaksade/mapit-spring.git')
                    }
                }
            }
        }
    }
}
```

Using the fluent syntax provided by the OpenShift Client Plugin, the pipeline first checks whether the application has already been created. If it has not, the pipeline creates the application on OpenShift, which builds it from the source code on GitHub using OpenShift Source-to-Image (S2I) and then deploys it.
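newApp() starts the S2I build but, like `oc new-app`, does not wait for it to finish. If later stages depend on the build, one option is to wait on the builds related to the build config. The following sketch uses the plugin's `related()` and `untilEach()` selector methods and assumes the build config is named `mapit-spring`, as in the pipeline above; the 10-minute timeout is an arbitrary choice.

```groovy
// Sketch: wait for the S2I build of 'mapit-spring' to complete.
script {
    openshift.withCluster() {
        def builds = openshift.selector('bc', 'mapit-spring').related('builds')
        timeout(10) {                         // minutes; aborts the stage if exceeded
            builds.untilEach(1) {             // re-evaluate until at least one build exists and...
                // ...every selected build reports the Complete phase
                return it.object().status.phase == 'Complete'
            }
        }
    }
}
```

A failed build keeps this loop waiting until the timeout fires, so a stricter version might also check for the Failed phase and stop early.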

The fluent syntax makes it easy to query OpenShift about applications and adapt the pipeline behaviour accordingly. The following more complex example builds the application using Maven and then deploys it into the DEV and STAGE environments:

```groovy
pipeline {
    // Run on a Jenkins slave node that has Maven available
    agent {
        label 'maven'
    }
    stages {
        stage('Build App') {
            steps {
                sh "mvn install"
            }
        }
        stage('Create Image Builder') {
            // Only create the binary build config if it does not exist yet
            when {
                expression {
                    openshift.withCluster() {
                        return !openshift.selector("bc", "mapit").exists()
                    }
                }
            }
            steps {
                script {
                    openshift.withCluster() {
                        openshift.newBuild("--name=mapit", "--image-stream=redhat-openjdk18-openshift:1.1", "--binary")
                    }
                }
            }
        }
        stage('Build Image') {
            steps {
                script {
                    openshift.withCluster() {
                        // Layer the JAR onto the OpenJDK image; the result is tagged mapit:latest
                        openshift.selector("bc", "mapit").startBuild("--from-file=target/mapit-spring.jar", "--wait")
                    }
                }
            }
        }
        stage('Promote to DEV') {
            steps {
                script {
                    openshift.withCluster() {
                        openshift.tag("mapit:latest", "mapit:dev")
                    }
                }
            }
        }
        stage('Create DEV') {
            when {
                expression {
                    openshift.withCluster() {
                        return !openshift.selector('dc', 'mapit-dev').exists()
                    }
                }
            }
            steps {
                script {
                    openshift.withCluster() {
                        // Deploy the image tagged for DEV and expose it through a route
                        openshift.newApp("mapit:dev", "--name=mapit-dev").narrow('svc').expose()
                    }
                }
            }
        }
        stage('Promote STAGE') {
            steps {
                script {
                    openshift.withCluster() {
                        // Promote the same image that was deployed to DEV
                        openshift.tag("mapit:dev", "mapit:stage")
                    }
                }
            }
        }
        stage('Create STAGE') {
            when {
                expression {
                    openshift.withCluster() {
                        return !openshift.selector('dc', 'mapit-stage').exists()
                    }
                }
            }
            steps {
                script {
                    openshift.withCluster() {
                        openshift.newApp("mapit:stage", "--name=mapit-stage").narrow('svc').expose()
                    }
                }
            }
        }
    }
}
```

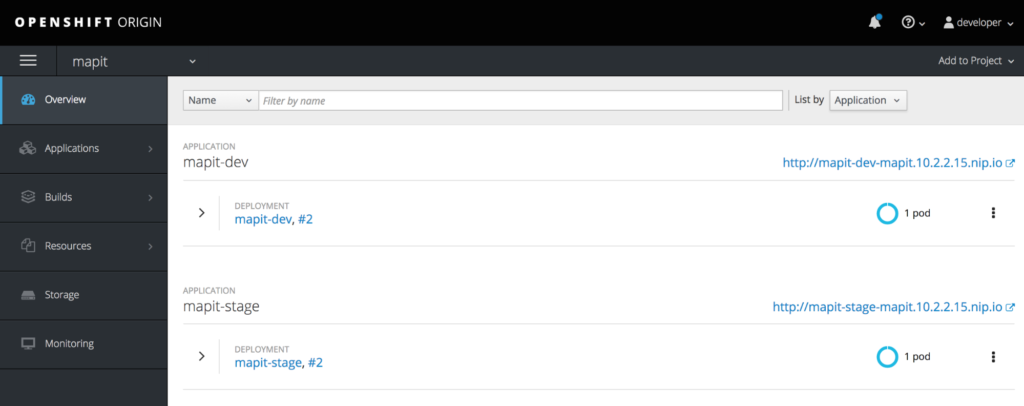

The agent block specifies that Jenkins should run this pipeline on a Jenkins slave node with the maven label, which indicates that Maven is available on that node. Jenkins checks out the application source code from GitHub, and the Build App stage builds a JAR archive from it using Maven. Since this is a Spring Boot application, the JAR can run directly on a Java runtime. The Create Image Builder stage creates a binary build config named mapit, based on the certified OpenJDK image, if it does not already exist. The Build Image stage then takes the JAR archive and layers it over the certified OpenJDK image in OpenShift to build an immutable container image for the MapIt application. When the MapIt image is ready, the Promote to DEV stage tags it for DEV and the Create DEV stage deploys it into the DEV environment. Finally, the Promote STAGE stage promotes the image that was built earlier and deployed into DEV by tagging it as mapit:stage, and the Create STAGE stage deploys it into the STAGE environment for testing.
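The pipeline does not wait for the DEV and STAGE deployments to finish. A hedged sketch of an extra stage that could be appended after Create STAGE uses the selector's `rollout().status()` method, which mirrors `oc rollout status`. The stage name is hypothetical, and right after a tag changes the image change trigger may not have started the new rollout yet, so treat this as a best-effort check rather than a guarantee.

```groovy
// Sketch of an optional stage to append after 'Create STAGE' (stage name is hypothetical).
stage('Verify STAGE') {
    steps {
        script {
            openshift.withCluster() {
                // Roughly equivalent to: oc rollout status dc/mapit-stage
                openshift.selector('dc', 'mapit-stage').rollout().status()
            }
        }
    }
}
```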

Following the build-only-once principle, the MapIt JAR archive and the MapIt container image are built only once in the pipeline and are then promoted across environments, which ensures that all tests run against exactly the same version of the application.
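One way to check the build-only-once property is to compare the image digests behind the DEV and STAGE tags. The following sketch of an optional stage reads both image stream tags with `selector('istag', ...)`, whose `object()` exposes the underlying image's sha256 name under `image.metadata.name`, and fails the run if they differ. The stage name is hypothetical.

```groovy
// Sketch of an optional stage (hypothetical name) that verifies both tags share one image.
stage('Verify Promotion') {
    steps {
        script {
            openshift.withCluster() {
                // Each ImageStreamTag points at an image whose name is its sha256 digest
                def devImage   = openshift.selector('istag', 'mapit:dev').object().image.metadata.name
                def stageImage = openshift.selector('istag', 'mapit:stage').object().image.metadata.name
                echo "DEV image: ${devImage}, STAGE image: ${stageImage}"
                if (devImage != stageImage) {
                    error("mapit:dev and mapit:stage reference different images")
                }
            }
        }
    }
}
```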

You can run the above pipeline on your local workstation using Minishift (a version later than 1.7.0), which gives you a local OpenShift cluster for development purposes. Install Minishift by following the Minishift Installation Guide, and then set up the pipeline with the following commands:

```shell
$ minishift addon enable xpaas
$ minishift start --memory 4096 --openshift-version=v3.7.0-rc.0
$ oc login -u developer
$ oc new-project mapit
$ oc new-app https://github.com/siamaksade/mapit-spring.git --strategy=pipeline
```

The --strategy=pipeline option instructs OpenShift to use the Jenkinsfile in the root of the specified Git repository as the pipeline definition. This lets you version the pipeline together with the application code: every pipeline run uses the latest Jenkinsfile in the Git repository, so you update the pipeline definition through the code repository rather than in OpenShift or Jenkins.

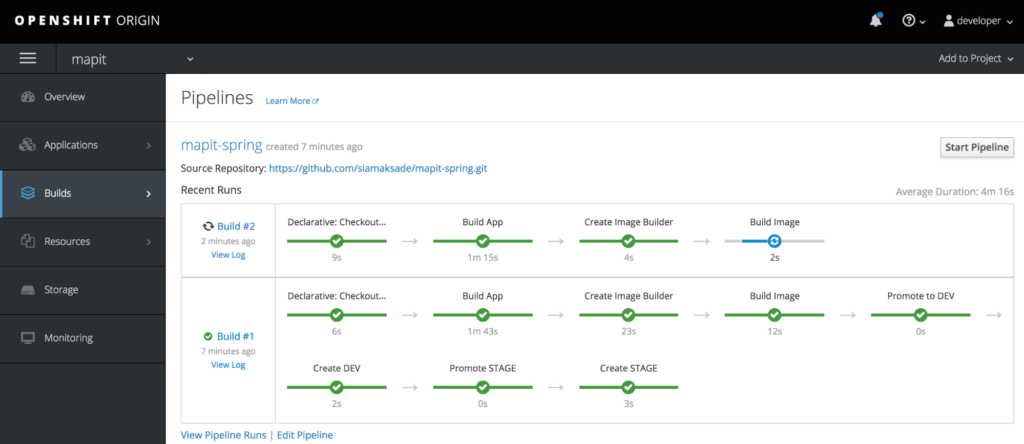

In the OpenShift Web Console, go to Builds → Pipelines to watch the MapIt pipeline progress and deploy the MapIt application into DEV and STAGE.

Conclusion

OpenShift provides tight integration with Jenkins to facilitate building continuous delivery pipelines. The new OpenShift Client (DSL) Plugin enhances this integration with a fluent and comprehensive syntax for interacting with OpenShift from a continuous delivery pipeline, exposing the full functionality of the OpenShift CLI in a Jenkinsfile. It also simplifies building complex pipelines that interact with multiple projects across multiple OpenShift clusters, and it lets a pipeline query OpenShift and adapt its behaviour to the current state of applications on OpenShift.
